
| Property | Value |
|---|---|
| URL | https://leimao.github.io/blog/Python-Concurrency-High-Level/ |
| Last Crawled | 2026-04-07 18:00:34 (15 days ago) |
| First Indexed | 2020-08-02 16:54:14 (5 years ago) |
| HTTP Status Code | 200 |
| Meta Title | Multiprocessing VS Threading VS AsyncIO in Python - Lei Mao's Log Book |
| Meta Description | Understand Python Concurrency from High-Level |
| Meta Canonical | null |



# Multiprocessing VS Threading VS AsyncIO in Python

Posted on 07-11-2020, updated on 07-11-2020. [blog](https://leimao.github.io/blog/), 11 minutes read (About 1666 words)

## Introduction

In modern computer programming, concurrency is often required to solve a problem faster. In Python, we usually have three library options for achieving concurrency: [`multiprocessing`](https://docs.python.org/3/library/multiprocessing.html), [`threading`](https://docs.python.org/3/library/threading.html), and [`asyncio`](https://docs.python.org/3/library/asyncio.html). Recently, I became aware that, as a scripting language, Python behaves subtly differently under concurrency than conventional compiled languages such as C/C++.

[Real Python](https://realpython.com/) has already published a good [tutorial](https://realpython.com/python-concurrency/) with [code examples](https://github.com/realpython/materials/tree/master/concurrency-overview) on Python concurrency. In this blog post, I would like to discuss `multiprocessing`, `threading`, and `asyncio` in Python at a high level, with some additional caveats that the Real Python tutorial does not mention. I have also borrowed some good diagrams from their tutorial, and the credit for those illustrations goes to them.

## CPU-Bound VS I/O-Bound

The problems that modern computers solve can generally be categorized as CPU-bound or I/O-bound. Whether a problem is CPU-bound or I/O-bound determines which of the concurrency libraries `multiprocessing`, `threading`, and `asyncio` we should choose. Of course, in some scenarios, the algorithm design might change a problem from CPU-bound to I/O-bound or vice versa. The concepts of CPU-bound and I/O-bound are universal across programming languages.

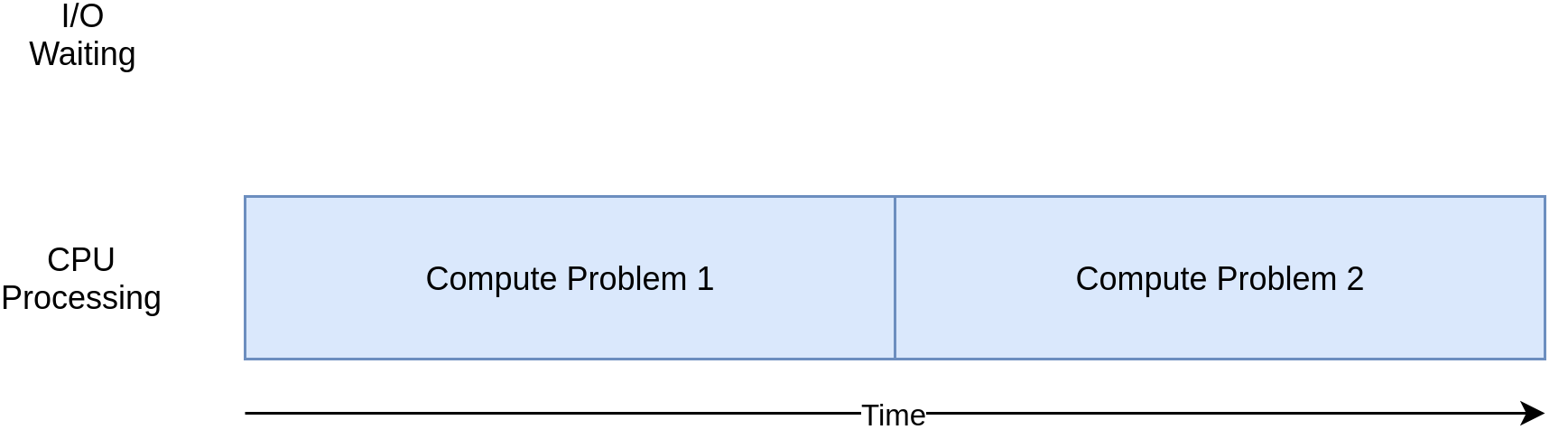

### CPU-Bound

A task is CPU-bound when the time it takes to complete is determined principally by the speed of the central processor. The faster the CPU (for example, the higher its clock rate), the better our program performs.

[Single-Process Single-Thread Synchronous for CPU-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/CPUBound.webp)

Many programs that run purely on a single computer are CPU-bound. For example: given a list of numbers, compute the sum of all the numbers in the list.
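To make the CPU-bound case concrete, here is a minimal single-process, single-thread sketch. The function names `sum_of_squares` and `run_serially` and the input sizes are hypothetical and do not come from the original post. The work is pure arithmetic with no I/O, so its running time depends essentially on how fast the CPU executes the loop.

```python
import time


def sum_of_squares(n: int) -> int:
    # Pure computation: no file, disk, or network access, so the
    # running time is governed by CPU speed alone.
    return sum(i * i for i in range(n))


def run_serially(inputs: list[int]) -> list[int]:
    # Single process, single thread: each task starts only after
    # the previous one has finished.
    return [sum_of_squares(n) for n in inputs]


if __name__ == "__main__":
    start = time.perf_counter()
    run_serially([1_000_000] * 4)
    print(f"serial: {time.perf_counter() - start:.2f} s")
```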

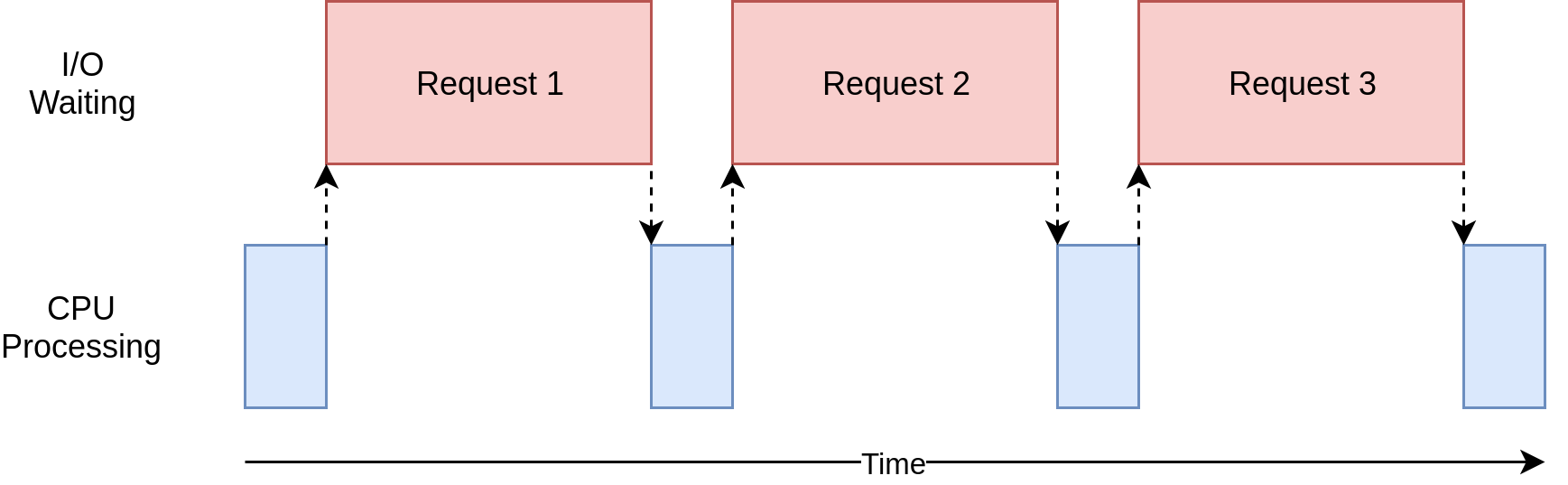

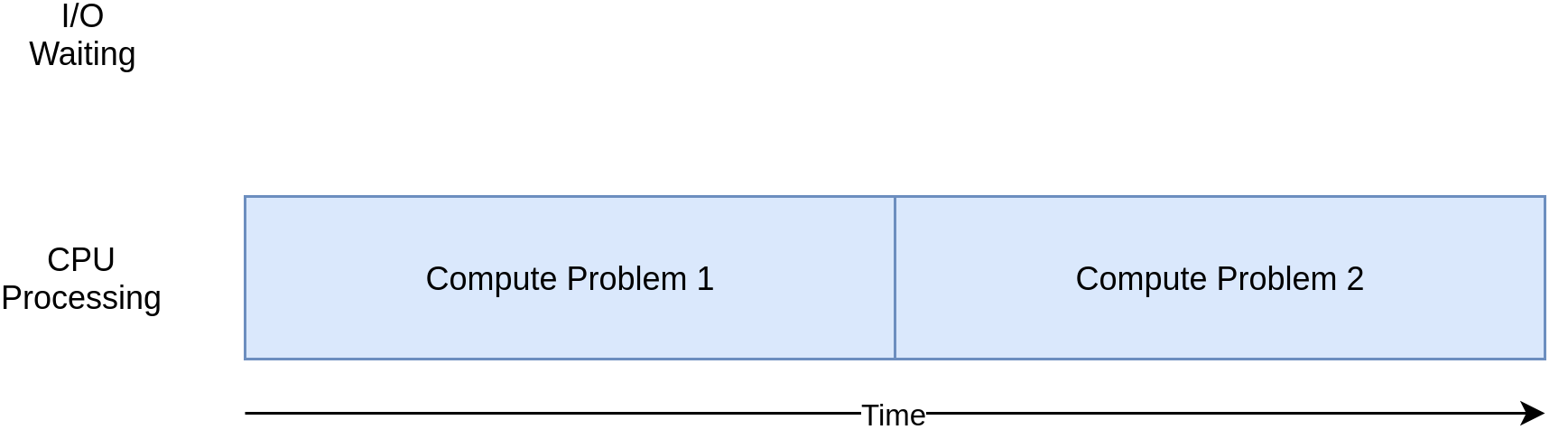

### I/O-Bound

A task is I/O-bound when the time it takes to complete is determined principally by the time spent waiting for input/output operations to finish. This is the opposite of a CPU-bound task: increasing the CPU clock rate will not significantly improve the performance of our program. Instead, faster I/O, such as faster memory, hard drive, or network I/O, will make our program run faster.

[Single-Process Single-Thread Synchronous for I/O-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/IOBound.webp)

Most programs that interact with web services are I/O-bound. For example: given a list of restaurant names, find their ratings on Yelp.
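The I/O-bound case can be sketched in the same way. Calling Yelp would require an API key and network access, so the hypothetical `fetch_rating` below simulates a request with `time.sleep`. The CPU sits idle during the sleep, just as it would while waiting for a real response. The restaurant names, the fixed rating, and the 0.1 s latency are made-up values.

```python
import time


def fetch_rating(restaurant: str, latency: float = 0.1) -> tuple[str, float]:
    # Hypothetical stand-in for a web request; the rating is fake data.
    # time.sleep blocks this thread without using the CPU, mimicking
    # the wait for a network response.
    time.sleep(latency)
    return restaurant, 4.5


def fetch_all_serially(restaurants: list[str]) -> list[tuple[str, float]]:
    # Each request waits for the previous one to finish, so the total
    # time is roughly len(restaurants) * latency.
    return [fetch_rating(name) for name in restaurants]


if __name__ == "__main__":
    start = time.perf_counter()
    print(fetch_all_serially(["A", "B", "C"]))
    print(f"{time.perf_counter() - start:.2f} s")
```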

## Process VS Thread in Python

### Process in Python

A [global interpreter lock](https://en.wikipedia.org/wiki/Global_interpreter_lock) (GIL) is a mechanism used in language interpreters to synchronize the execution of threads so that only one thread can execute interpreted code at a time. An interpreter that uses a GIL never executes code from more than one thread simultaneously, even on a multi-core processor. As a result, a single interpreter process can keep at most one hardware thread of the CPU busy running interpreted code. In the rest of this post, "native thread" refers to such a hardware thread of a physical CPU core, as opposed to the thread concept in programming languages.

Because the standard Python interpreter (CPython) uses a GIL, a single-process Python program can use only one native thread at a time to execute Python code. That means a single-process Python program cannot use more than 100% of the CPU (we define full utilization of one native thread as 100%), regardless of whether it is single-threaded or multi-threaded. (Blocking I/O calls and some C extensions release the GIL, so work done inside them is an exception.) Compiled languages such as C/C++ have neither an interpreter nor a GIL. Therefore, a single-process multi-threaded C/C++ program can use many CPU cores and native threads, and its CPU utilization can exceed 100%.

Therefore, to maximize the performance of a CPU-bound task in Python, we have to write a multi-process Python program.

### Thread in Python

Because a single-process Python program can use only one native thread to execute Python code, it can achieve at most 100% CPU utilization, no matter how many threads it creates.

Therefore, for a CPU-bound task, a single-process multi-threaded Python program does not improve performance. However, this does not mean that multithreading is useless in Python: for an I/O-bound task, multiple threads can improve the program's performance.
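The claim that threads do not help CPU-bound work can be checked with a small sketch. It assumes the standard CPython build with the GIL; the experimental free-threaded build available since Python 3.13 can behave differently. The helper names are hypothetical, and no specific timing numbers are claimed, since they depend on the machine.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def sum_of_squares(n: int) -> int:
    # CPU-bound: the running thread needs the GIL for essentially the whole call.
    return sum(i * i for i in range(n))


def run_serially(inputs: list[int]) -> list[int]:
    return [sum_of_squares(n) for n in inputs]


def run_threaded(inputs: list[int], workers: int = 4) -> list[int]:
    # Several threads, but on a GIL build only one of them executes
    # Python bytecode at any moment, so the work is not parallelized.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(sum_of_squares, inputs))


if __name__ == "__main__":
    inputs = [1_000_000] * 4
    for label, runner in (("serial", run_serially), ("threads", run_threaded)):
        start = time.perf_counter()
        runner(inputs)
        # Expect similar timings on a GIL build (threads may even be slower).
        print(f"{label}: {time.perf_counter() - start:.2f} s")
```

Both runners produce the same results. Only the timings are interesting, and on a GIL build the threaded run typically shows no speed-up.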

## Multiprocessing VS Threading VS AsyncIO in Python

### Multiprocessing

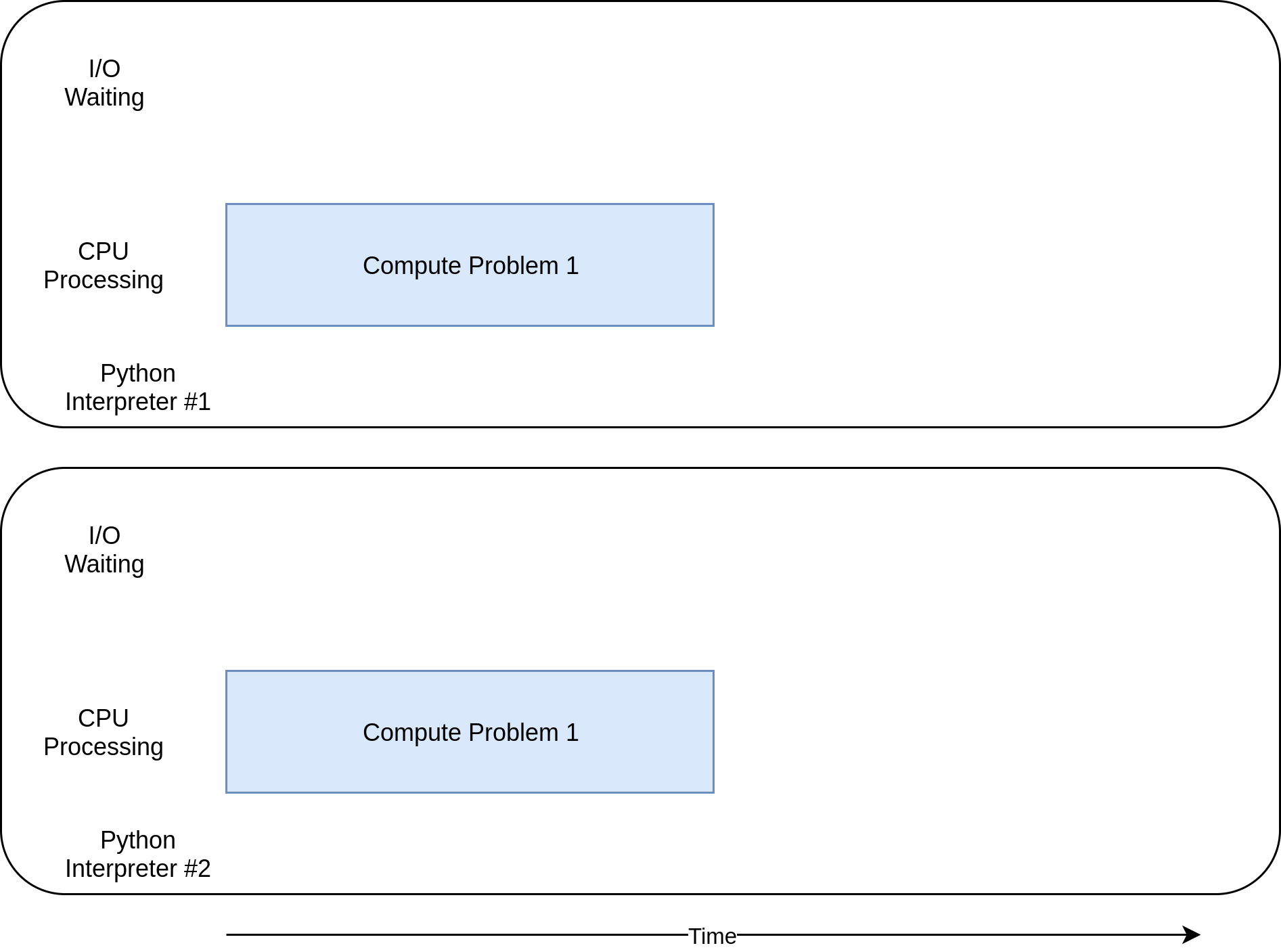

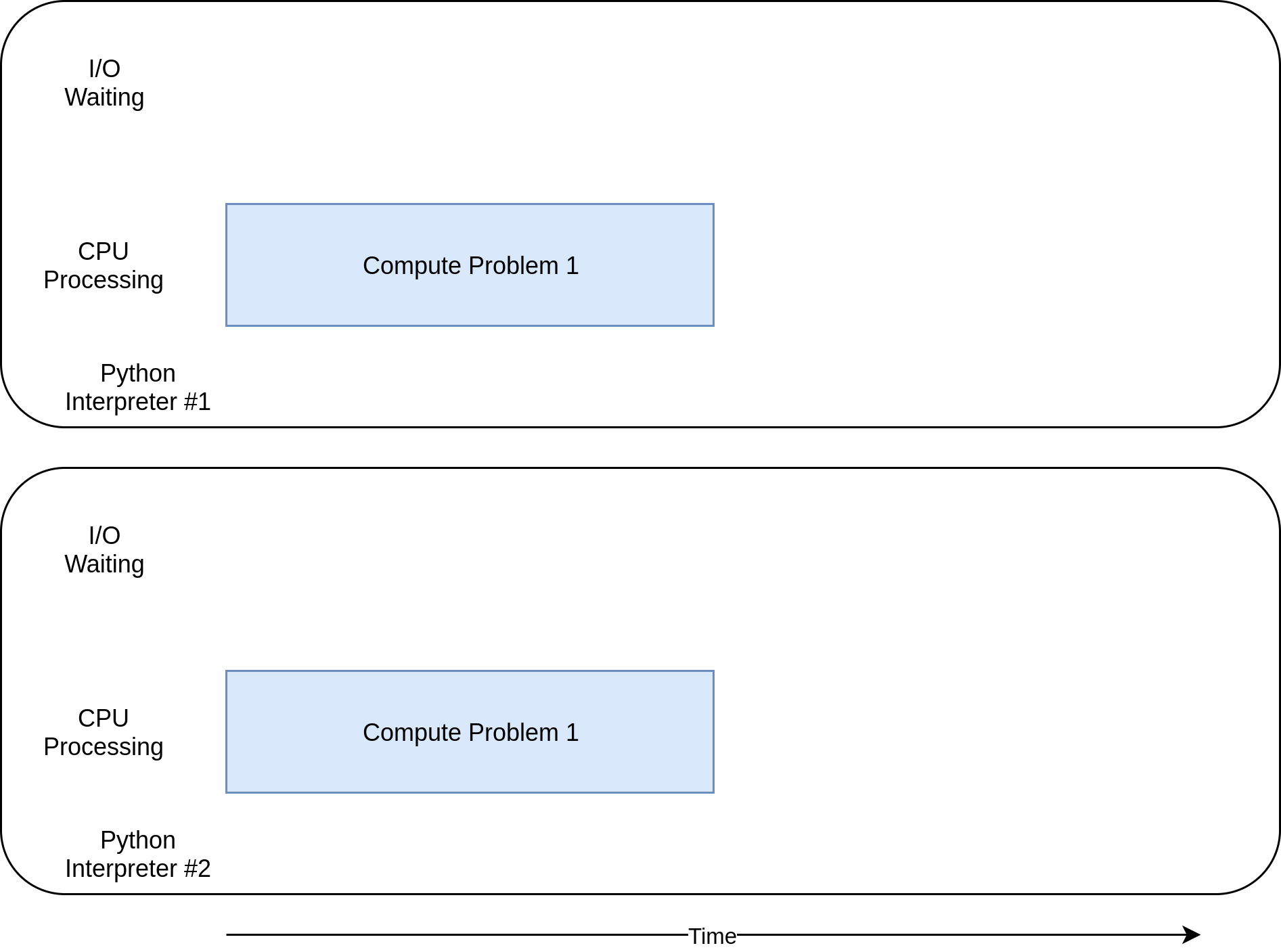

Using Python `multiprocessing`, we can run a Python program as multiple processes. In principle, a multi-process Python program can fully utilize all the available CPU cores and native threads, because each process runs its own Python interpreter with its own GIL. However, the processes are independent of each other and do not share memory. Collaborating across processes with `multiprocessing` therefore requires inter-process communication mechanisms provided by the operating system, such as pipes, and the exchanged data has to be serialized. As a result, the overhead is somewhat larger than with threads.
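The statement that processes do not share memory can be illustrated with a minimal sketch. A module-level variable modified in a child process stays unchanged in the parent, and the child's value has to be sent back explicitly, here through a `multiprocessing.Queue`. The names `counter`, `increment_and_report`, and `demo` are hypothetical.

```python
import multiprocessing as mp

# Module-level state: every process ends up with its own independent copy.
counter = 0


def increment_and_report(queue) -> None:
    # Runs in the child process and modifies only the child's copy.
    global counter
    counter += 1
    # The value is pickled and sent to the parent through the queue.
    queue.put(counter)


def demo() -> tuple[int, int]:
    queue = mp.Queue()
    child = mp.Process(target=increment_and_report, args=(queue,))
    child.start()
    # Read before join(): joining a process that still has queued data
    # waiting to be consumed can deadlock.
    child_value = queue.get()
    child.join()
    return counter, child_value


if __name__ == "__main__":  # required when the "spawn" start method is used
    print(demo())  # (parent's counter, child's counter)
```

The parent's `counter` stays 0 while the child reports 1. This holds under both the "fork" and "spawn" start methods.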

[Multi-Process for CPU-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/CPUMP.webp)

Therefore, for a CPU-bound task in Python, `multiprocessing` is an ideal library for maximizing performance.
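Below is a minimal sketch of the multi-process approach using `multiprocessing.Pool`. The workload and the choice of 4 worker processes are illustrative assumptions. Each worker process has its own interpreter and GIL, so the calls can run on different CPU cores in parallel.

```python
import multiprocessing as mp
import time


def sum_of_squares(n: int) -> int:
    # CPU-bound work; each call runs inside a worker process.
    return sum(i * i for i in range(n))


def run_in_processes(inputs: list[int], processes: int = 4) -> list[int]:
    # Pool.map distributes `inputs` across worker processes, pickles the
    # arguments and results, and returns results in input order.
    with mp.Pool(processes=processes) as pool:
        return pool.map(sum_of_squares, inputs)


if __name__ == "__main__":  # guard needed on platforms that spawn workers
    start = time.perf_counter()
    run_in_processes([1_000_000] * 4)
    print(f"processes: {time.perf_counter() - start:.2f} s")
```

On a machine with several idle cores, this version should finish noticeably faster than the serial and threaded runs of the same workload. For very small inputs, the process start-up and pickling overhead mentioned above can dominate.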

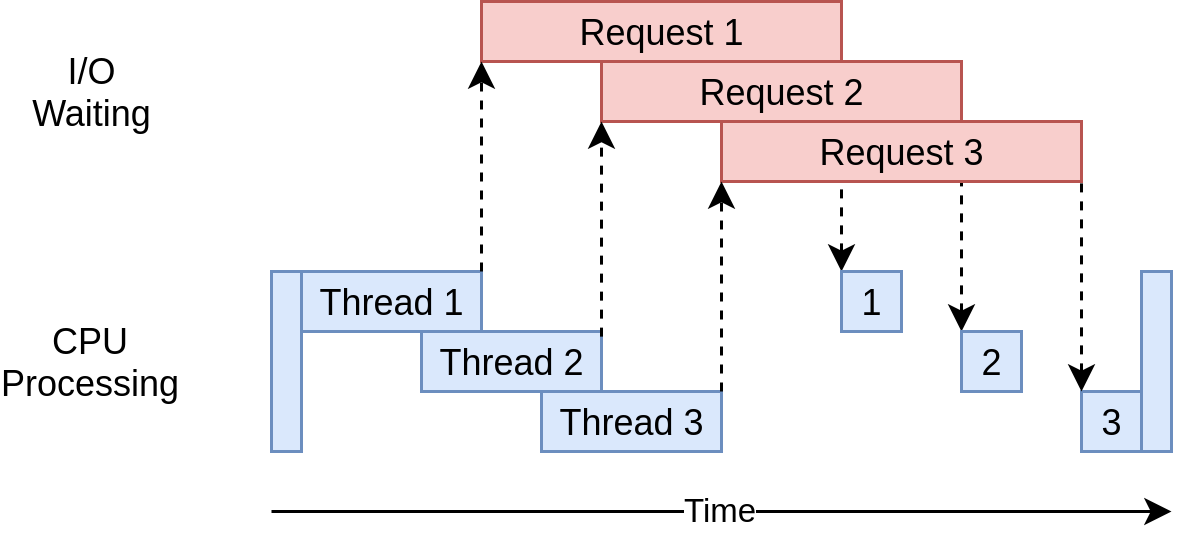

### Threading

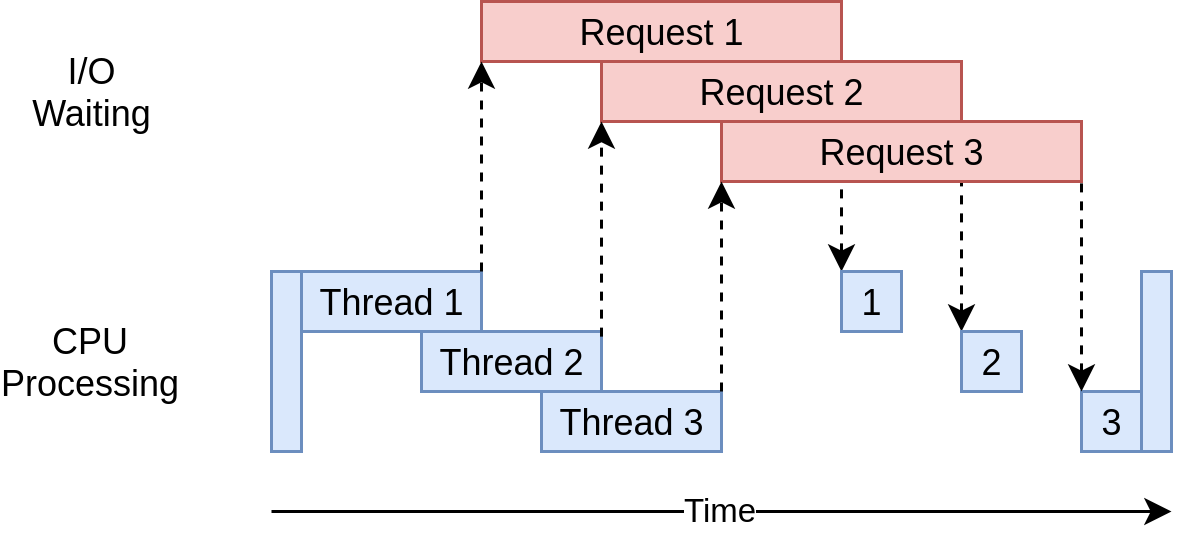

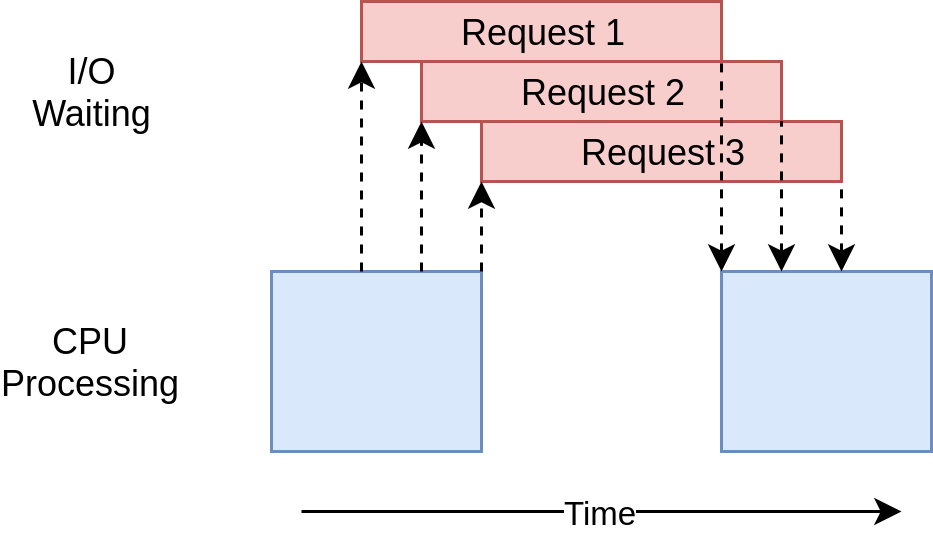

Using Python `threading`, we can make better use of a CPU that would otherwise sit idle while waiting for I/O. This works despite the GIL because CPython releases the GIL while a thread is blocked on I/O, so other threads can run in the meantime. By overlapping the waiting times of the requests, we improve performance. In addition, because all the threads share the same memory, we have to be careful when doing collaborative tasks with `threading` and use locks where necessary. A lock ensures that only one thread at a time can modify the shared data it protects, but acquiring and releasing it also introduces some overhead. Note that the threads discussed here are different from the native threads of a CPU core. A CPU core nowadays often has 2 native threads, but a single-process Python program can have many more threads than that.
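Because all threads see the same variables, a read-modify-write such as `counter += 1` can interleave between threads unless it is protected. The following is a minimal sketch of guarding a shared counter with `threading.Lock`. The function name and the thread and iteration counts are illustrative.

```python
import threading


def count_with_lock(num_threads: int = 4, increments: int = 10_000) -> int:
    counter = 0
    lock = threading.Lock()

    def worker() -> None:
        nonlocal counter
        for _ in range(increments):
            # `counter += 1` reads, adds, and writes back; holding the lock
            # makes that sequence atomic with respect to the other threads.
            with lock:
                counter += 1

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter


if __name__ == "__main__":
    print(count_with_lock())  # 4 threads x 10,000 increments
```

Taking the lock inside the loop keeps the critical section small, but it also means 40,000 acquire/release pairs. This is the lock overhead mentioned above.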

[Single-Process Multi-Thread for I/O-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/Threading.webp)

Therefore, for an I/O-bound task in Python, `threading` can be a good candidate for improving performance.
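Here is a sketch of the single-process multi-thread approach to the restaurant-rating example, using `concurrent.futures.ThreadPoolExecutor`. As before, `fetch_rating` is a hypothetical request simulated with `time.sleep`, and the latency and rating are made up. The waits of the individual requests overlap, so the total time is close to that of a single request.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_rating(restaurant: str, latency: float = 0.1) -> tuple[str, float]:
    # Hypothetical request; CPython releases the GIL while a thread
    # sleeps (or waits on real I/O), so other threads can run meanwhile.
    time.sleep(latency)
    return restaurant, 4.5


def fetch_all_threaded(
    restaurants: list[str], workers: int = 5
) -> list[tuple[str, float]]:
    # With workers >= len(restaurants), all waits overlap and the total
    # time is roughly one latency instead of len(restaurants) * latency.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in the order of the inputs.
        return list(pool.map(fetch_rating, restaurants))


if __name__ == "__main__":
    start = time.perf_counter()
    print(fetch_all_threaded(["A", "B", "C", "D", "E"]))
    print(f"{time.perf_counter() - start:.2f} s")
```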

It should also be noted that the threads are managed by the operating system's scheduler, which decides which thread runs and when. This can be a shortcoming of `threading`: because the operating system knows about each thread, it can interrupt a thread at any point to start running a different one. This is called pre-emptive multitasking, since the operating system can pre-empt your thread to make the switch.

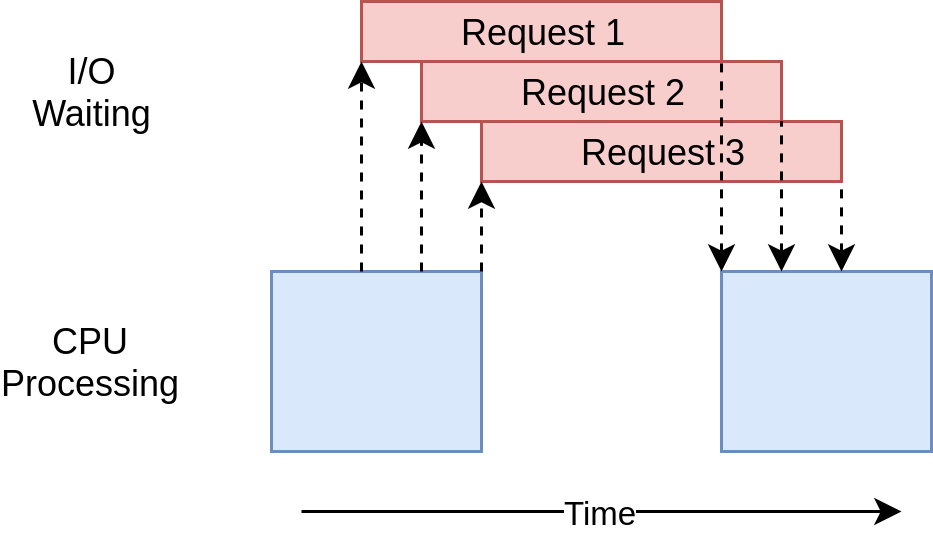

### AsyncIO

Given that `threading` uses multiple threads to improve the performance of an I/O-bound task, we may wonder whether multiple threads are necessary at all. The answer is no, as long as we know when to switch tasks. In a Python program using `threading`, each thread simply stays idle between the moment its request is sent and the moment the result comes back. If a thread knew when its I/O request had been sent, it could switch to another task instead of staying idle, and a single thread would be sufficient to manage all these tasks. Without the overhead of managing threads, an I/O-bound task should then run faster. `threading` cannot do this, but `asyncio` can.
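The same restaurant-rating example can be sketched with `asyncio` on a single thread. `fetch_rating` is again hypothetical, and `asyncio.sleep` stands in for awaiting a real network response. While one coroutine waits, the event loop runs the others, so the waits overlap without any extra threads.

```python
import asyncio
import time


async def fetch_rating(restaurant: str, latency: float = 0.1) -> tuple[str, float]:
    # Hypothetical request: awaiting asyncio.sleep hands control back to
    # the event loop, just as awaiting a real network response would.
    await asyncio.sleep(latency)
    return restaurant, 4.5


async def fetch_all(restaurants: list[str]) -> list[tuple[str, float]]:
    # All requests run concurrently on one thread; gather() returns the
    # results in the order the coroutines were passed in.
    return await asyncio.gather(*(fetch_rating(name) for name in restaurants))


if __name__ == "__main__":
    start = time.perf_counter()
    print(asyncio.run(fetch_all(["A", "B", "C", "D", "E"])))
    print(f"{time.perf_counter() - start:.2f} s")
```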

Using Python `asyncio`, we can also make better use of a CPU that would otherwise sit idle while waiting for I/O. Unlike `threading`, `asyncio` is single-process and single-threaded. It runs an event loop that keeps track of all the tasks. When a task reaches an `await` on an operation that is not ready yet, such as a pending network response, it hands control back to the event loop. The loop then runs another task that is ready and resumes the original task once the awaited operation completes, which minimizes the time spent waiting for I/O. This is called cooperative multitasking: the tasks must cooperate by announcing, with `await`, when they are ready to be switched out.
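Cooperative switching can be observed directly with a small sketch. Two tasks record their progress in a shared log. When they `await asyncio.sleep(0)` between steps, they give the event loop a chance to switch and their steps interleave. Without any `await`, each task runs to completion before the other one starts. The names `worker` and `run` are hypothetical.

```python
import asyncio


async def worker(name: str, steps: int, log: list[str], cooperate: bool) -> None:
    for i in range(steps):
        log.append(f"{name}{i}")
        if cooperate:
            # Explicit yield point: lets the event loop run other ready tasks.
            await asyncio.sleep(0)


async def run(cooperate: bool) -> list[str]:
    log: list[str] = []
    await asyncio.gather(
        worker("A", 3, log, cooperate),
        worker("B", 3, log, cooperate),
    )
    return log


if __name__ == "__main__":
    print(asyncio.run(run(cooperate=True)))   # ['A0', 'B0', 'A1', 'B1', 'A2', 'B2']
    print(asyncio.run(run(cooperate=False)))  # ['A0', 'A1', 'A2', 'B0', 'B1', 'B2']
```

Unlike pre-emptive threads, the switch points here are exactly the `await` expressions, so the order is deterministic.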

[Single-Process Single-Thread Asynchronous for I/O-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/Asyncio.webp)

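The shortcoming of `asyncio` is that the event loop cannot switch tasks unless our code tells it where it may do so. Every potentially slow operation has to be written as something that can be awaited, and a blocking call inside a coroutine stalls the whole event loop. This requires some additional effort when we write programs using `asyncio`.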
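The following sketch shows what happens when a coroutine makes a blocking call that the event loop knows nothing about, and one way to work around it. `blocking_io` is a hypothetical stand-in (simulated with `time.sleep`) for a library call without `asyncio` support. `asyncio.to_thread` requires Python 3.9 or later.

```python
import asyncio
import time


def blocking_io(latency: float) -> None:
    # Hypothetical synchronous call (e.g. a library without asyncio support).
    time.sleep(latency)


async def blocking_task(latency: float) -> None:
    # Never awaits anything, so the whole event loop is stuck until it returns.
    blocking_io(latency)


async def offloaded_task(latency: float) -> None:
    # Runs the blocking call in a worker thread and awaits the result,
    # leaving the event loop free to run the other tasks.
    await asyncio.to_thread(blocking_io, latency)


async def time_three(task_factory, latency: float = 0.2) -> float:
    start = time.perf_counter()
    await asyncio.gather(*(task_factory(latency) for _ in range(3)))
    return time.perf_counter() - start


if __name__ == "__main__":
    # Expect roughly 3 x latency for the blocking version
    # and roughly 1 x latency for the offloaded one.
    print(f"blocking:  {asyncio.run(time_three(blocking_task)):.2f} s")
    print(f"offloaded: {asyncio.run(time_three(offloaded_task)):.2f} s")
```

Offloading to a thread is a workaround. Where an `asyncio`-native library exists for the I/O in question, using it keeps everything on the single event-loop thread.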

## Summary

| Concurrency Type | Features | Use Criteria | Metaphor |
|---|---|---|---|
| Multiprocessing | Multiple processes, high CPU utilization. | CPU-bound | We have ten kitchens, ten chefs, ten dishes to cook. |
| Threading | Single process, multiple threads, pre-emptive multitasking, OS decides task switching. | I/O-bound with fast I/O and a limited number of connections | We have one kitchen, ten chefs, ten dishes to cook. The kitchen is crowded when the ten chefs are present together. |
| AsyncIO | Single process, single thread, cooperative multitasking, tasks cooperatively decide switching. | I/O-bound with slow I/O and many connections | We have one kitchen, one chef, ten dishes to cook. |

## Caveats

### HTOP vs TOP

`htop` can make a multi-threaded Python program look like a multi-process program. By default it lists each userland thread on its own row and shows the thread ID in the `PID` column, so several `PID`s appear for a single Python program. `top`, which lists one row per process by default, does not show this behavior. There is also such an [observation](https://stackoverflow.com/questions/38544265/multithreaded-python-program-starting-multiple-processes) on StackOverflow.
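To check that the extra entries are threads rather than processes, a minimal sketch can compare `os.getpid()` with `threading.get_native_id()` (Python 3.8+) inside several threads. All threads report the same process ID but different OS thread IDs. On Linux, those thread IDs are the numbers `htop` shows in its `PID` column for thread rows. The function name is hypothetical.

```python
import os
import threading


def collect_ids(num_threads: int = 3) -> list[tuple[int, int]]:
    records: list[tuple[int, int]] = []
    lock = threading.Lock()
    # The barrier keeps every thread alive until all of them have recorded
    # their IDs, so no thread ID can be reused within this run.
    barrier = threading.Barrier(num_threads)

    def worker() -> None:
        with lock:
            # (process ID shared by all threads, OS-level thread ID)
            records.append((os.getpid(), threading.get_native_id()))
        barrier.wait()

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return records


if __name__ == "__main__":
    for pid, tid in collect_ids():
        print(f"pid={pid} native_thread_id={tid}")
```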

## References

- [Speed Up Your Python Program With Concurrency](https://realpython.com/python-concurrency/)

- [Async Python: The Different Forms of Concurrency](http://masnun.rocks/2016/10/06/async-python-the-different-forms-of-concurrency/)

Multiprocessing VS Threading VS AsyncIO in Python

<https://leimao.github.io/blog/Python-Concurrency-High-Level/>

###### Author

Lei Mao

###### Posted on

07-11-2020

###### Updated on

07-11-2020

###### Licensed under

***

[Python,](https://leimao.github.io/tags/Python/)

[Concurrency](https://leimao.github.io/tags/Concurrency/)


© 2017-2026 Lei Mao Powered by [Hexo](https://hexo.io/) & [Icarus](https://github.com/ppoffice/hexo-theme-icarus)


| Readable Markdown | ## Introduction

In modern computer programming, concurrency is often required to accelerate solving a problem. In Python programming, we usually have the three library options to achieve concurrency, [`multiprocessing`](https://docs.python.org/3/library/multiprocessing.html), [`threading`](https://docs.python.org/3/library/threading.html), and [`asyncio`](https://docs.python.org/3/library/asyncio.html). Recently, I was aware that as a scripting language Python’s behavior of concurrency has subtle differences compared to the conventional compiled languages such as C/C++.

[Real Python](https://realpython.com/) has already given a good [tutorial](https://realpython.com/python-concurrency/) with [code examples](https://github.com/realpython/materials/tree/master/concurrency-overview) on Python concurrency. In this blog post, I would like to discuss the `multiprocessing`, `threading`, and `asyncio` in Python from a high-level, with some additional caveats that the Real Python tutorial has not mentioned. I have also borrowed some good diagrams from their tutorial and the readers should give the credits to them on those illustrations.

## CPU-Bound VS I/O-Bound

The problems that our modern computers are trying to solve could be generally categorized as CPU-bound or I/O-bound. Whether the problem is CPU-bound or I/O-bound affects our selection from the concurrency libraries `multiprocessing`, `threading`, and `asyncio`. Of course, in some scenarios, the algorithm design for solving the problem might change the problem from CPU-bound to I/O-bound or vice versa. The concept of CPU-bound and I/O-bound are universal for all programming languages.

### CPU-Bound

CPU-bound refers to a condition when the time for it to complete the task is determined principally by the speed of the central processor. The faster clock-rate CPU we have, the higher performance of our program will have.

[Single-Process Single-Thread Synchronous for CPU-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/CPUBound.webp)

Most of single computer programs are CPU-bound. For example, given a list of numbers, computing the sum of all the numbers in the list.

### I/O-Bound

I/O bound refers to a condition when the time it takes to complete a computation is determined principally by the period spent waiting for input/output operations to be completed. This is the opposite of a task being CPU bound. Increasing CPU clock-rate will not increase the performance of our program significantly. On the contrary, if we have faster I/O, such as faster memory I/O, hard drive I/O, network I/O, etc, the performance of our program will boost.

[Single-Process Single-Thread Synchronous for I/O-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/IOBound.webp)

Most of the web service related programs are I/O-bound. For example, given a list of restaurant names, finding out their ratings on Yelp.

## Process VS Thread in Python

### Process in Python

A [global interpreter lock](https://en.wikipedia.org/wiki/Global_interpreter_lock) (GIL) is a mechanism used in computer-language interpreters to synchronize the execution of threads so that only one native thread can execute at a time. An interpreter that uses GIL always allows exactly one native thread to execute at a time, even if run on a multi-core processor. Note that the native thread here is the number of threads in the physical CPU core, instead of the thread concept in the programming languages.

Because Python interpreter uses GIL, a single-process Python program could only use one native thread during execution. That means single-process Python program could not utilize CPU more than 100% (we define the full utilization of a native thread to be 100%) regardless whether it is single-process single-thread or single-process multi-thread. Conventional compiled programming languages, such as C/C++, do not have interpreter, not even mention GIL. Therefore, for a single-process multi-thread C/C++ program, it could utilize many CPU cores and many native threads, and the CPU utilization could be greater than 100%.

Therefore, for a CPU-bound task in Python, we would have to write multi-process Python program to maximize its performance.

### Thread in Python

Because a single-process Python could only use one CPU native thread. No matter how many threads were used in a single-process Python program, a single-process multi-thread Python program could only achieve at most 100% CPU utilization.

Therefore, for a CPU-bound task in Python, single-process multi-thread Python program would not improve the performance. However, this does not mean multi-thread is useless in Python. For a I/O-bound task in Python, multi-thread could be used to improve the program performance.

## Multiprocessing VS Threading VS AsyncIO in Python

### Multiprocessing

Using Python `multiprocessing`, we are able to run a Python using multiple processes. In principle, a multi-process Python program could fully utilize all the CPU cores and native threads available, by creating multiple Python interpreters on many native threads. Because all the processes are independent to each other, and they don’t share memory. To do collaborative tasks in Python using `multiprocessing`, it requires to use the API provided the operating system. Therefore, there will be slightly large overhead.

[Multi-Process for CPU-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/CPUMP.webp)

Therefore, for a CPU-bound task in Python, `multiprocessing` would be a perfect library to use to maximize the performance.

### Threading

Using Python `threading`, we are able to make better use of the CPU sitting idle when waiting for the I/O. By overlapping the waiting time for requests, we are able to improve the performance. In addition, because all the threads share the same memory, to do collaborative tasks in Python using `threading`, we would have to be careful and use locks when necessary. Lock and unlock make sure that only one thread could write to memory at one time, but this will also introduce some overhead. Note that the threads we discussed here are different to the native threads in CPU core. The number of native threads in CPU core is usually 2 nowadays, but the number of threads in a single-process Python program could be much larger than 2.

[Single-Process Multi-Thread for I/O-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/Threading.webp)

Therefore, for an I/O-bound task in Python, `threading` can be a good library to use to maximize performance.
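A minimal sketch of the I/O-bound case with a thread pool. Here `fake_request` is a hypothetical stand-in for a network call: `time.sleep` releases the GIL while it waits, just as blocking socket I/O does, so the waits of different threads overlap.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_request(i: int) -> int:
    # Hypothetical stand-in for a blocking network call.
    time.sleep(0.2)
    return i * i


def fetch_all(n: int = 10) -> tuple[float, list[int]]:
    start = time.perf_counter()
    # One thread per request, so all the waits can overlap.
    with ThreadPoolExecutor(max_workers=n) as pool:
        results = list(pool.map(fake_request, range(n)))
    return time.perf_counter() - start, results


if __name__ == "__main__":
    elapsed, results = fetch_all()
    # The ten 0.2 s waits overlap, so this takes roughly 0.2 s rather than 2 s.
    print(f"{elapsed:.2f} s", results)
```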

It should also be noted that the threads are managed by the operating system's scheduler, which decides which thread runs and when. This can be a shortcoming of `threading`: because the operating system knows about each thread, it can interrupt a thread at any time, even in the middle of updating shared data, to start running a different one. This is called pre-emptive multitasking, since the operating system can pre-empt your thread to make the switch.
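A minimal sketch of pre-emption: the hypothetical `spin` thread never sleeps or otherwise offers to give up control, yet the main thread still gets to run again and stop it. In CPython, besides the operating system's own scheduling, the interpreter asks the thread holding the GIL to release it at a regular interval reported by `sys.getswitchinterval()` (0.005 s by default).

```python
import sys
import threading
import time


def spin(stop: threading.Event, counter: list[int]) -> None:
    # Busy loop that never voluntarily yields control.
    while not stop.is_set():
        counter[0] += 1


def main() -> int:
    stop = threading.Event()
    counter = [0]
    worker = threading.Thread(target=spin, args=(stop, counter))
    worker.start()
    # The main thread still gets scheduled after this sleep: the spinning
    # thread is interrupted without its cooperation.
    time.sleep(0.1)
    stop.set()
    worker.join()
    return counter[0]


if __name__ == "__main__":
    print("switch interval:", sys.getswitchinterval(), "s")
    print("iterations before being stopped:", main())
```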

### AsyncIO

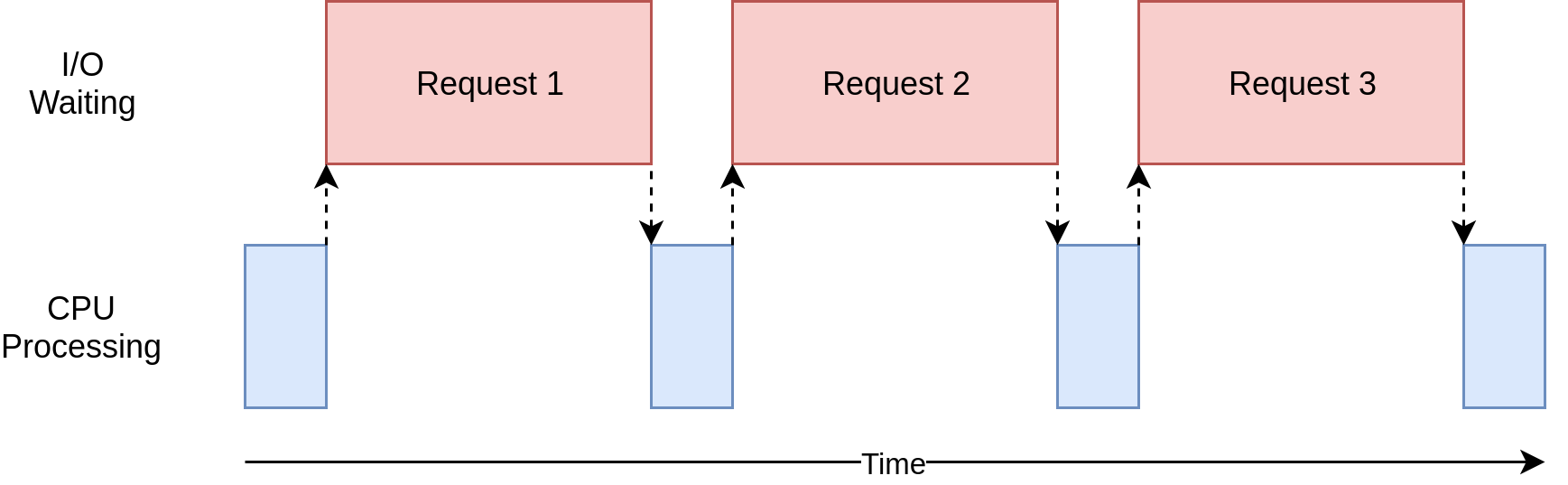

Given that `threading` uses multiple threads to maximize the performance of an I/O-bound task in Python, we may wonder whether multiple threads are necessary at all. The answer is no, if you know when to switch tasks. For example, each thread in a Python program using `threading` simply stays idle between sending its request and receiving the result. If a thread knew when an I/O request had been sent, it could switch to another task instead of staying idle, and a single thread would be sufficient to manage all these tasks. Without the thread management overhead, execution should be faster for an I/O-bound task. `threading` cannot do this, but `asyncio` can.

Using Python `asyncio`, we are also able to make better use of the CPU, which would otherwise sit idle while waiting for I/O. What differs from `threading` is that `asyncio` is single-process and single-thread. An event loop in `asyncio` keeps track of the state of every task. When the running task signals that it is waiting, for example on I/O, the event loop schedules another task that is ready to run, thereby minimizing the time spent waiting for I/O. This is called cooperative multitasking: the tasks must cooperate by announcing when they are ready to be switched out.
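A minimal sketch of the same I/O-bound pattern with `asyncio`. The coroutine `fake_async_request` is hypothetical, and `asyncio.sleep` stands in for real asynchronous I/O. Each task records `threading.get_ident()` to show that every task runs on the same single thread while the waits still overlap.

```python
import asyncio
import threading
import time


async def fake_async_request(i: int) -> tuple[int, int]:
    # `await` hands control back to the event loop while this "request" waits.
    await asyncio.sleep(0.2)
    return i * i, threading.get_ident()


async def fetch_all(n: int = 10) -> tuple[float, list[int], set[int]]:
    start = time.perf_counter()
    # gather() schedules all coroutines on the one event loop.
    pairs = await asyncio.gather(*(fake_async_request(i) for i in range(n)))
    elapsed = time.perf_counter() - start
    results = [value for value, _ in pairs]
    thread_ids = {tid for _, tid in pairs}
    return elapsed, results, thread_ids


if __name__ == "__main__":
    elapsed, results, thread_ids = asyncio.run(fetch_all())
    # All ten waits overlap on a single thread (roughly 0.2 s in total).
    print(f"{elapsed:.2f} s", results, "threads used:", len(thread_ids))
```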

[Single-Process Single-Thread Asynchronous for I/O-Bound](https://leimao.github.io/images/blog/2020-07-11-Python-Concurrency-High-Level/Asyncio.webp)

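The shortcoming of `asyncio` is that the event loop does not know about a task's progress unless the task tells it, which in practice means every task must explicitly yield control (with `await`) at the points where it waits. This requires some additional effort when writing programs using `asyncio`.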
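A minimal sketch of what happens when a task does not tell the event loop it is waiting. The coroutine `blocking_task` (hypothetical) calls `time.sleep`, which never yields, so the loop is frozen and the tasks run one after another. `cooperative_task` awaits `asyncio.sleep` instead, so the loop can interleave the waits.

```python
import asyncio
import time
from collections.abc import Awaitable, Callable


async def blocking_task() -> None:
    # time.sleep never yields: the whole event loop stops for 0.2 s.
    time.sleep(0.2)


async def cooperative_task() -> None:
    # await asyncio.sleep tells the event loop it may run other tasks now.
    await asyncio.sleep(0.2)


async def timed(task_factory: Callable[[], Awaitable[None]], n: int = 3) -> float:
    start = time.perf_counter()
    await asyncio.gather(*(task_factory() for _ in range(n)))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"blocking:    {asyncio.run(timed(blocking_task)):.2f} s")     # roughly n * 0.2 s
    print(f"cooperative: {asyncio.run(timed(cooperative_task)):.2f} s")  # roughly 0.2 s
```

The same applies to any blocking call inside a coroutine, such as a synchronous HTTP client: it must be replaced by an awaitable equivalent, or moved off the loop, for the other tasks to make progress.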

## Summary

| Concurrency Type | Features | Use Criteria | Metaphor |
|---|---|---|---|
| Multiprocessing | Multiple processes, high CPU utilization. | CPU-bound | We have ten kitchens, ten chefs, ten dishes to cook. |
| Threading | Single process, multiple threads, pre-emptive multitasking, OS decides task switching. | Fast I/O-bound | We have one kitchen, ten chefs, ten dishes to cook. The kitchen is crowded when the ten chefs are present together. |
| AsyncIO | Single process, single thread, cooperative multitasking, tasks cooperatively decide switching. | Slow I/O-bound | We have one kitchen, one chef, ten dishes to cook. |

## Caveats

### HTOP vs TOP

`htop` sometimes makes a multi-thread Python program look like a multi-process program, because by default it lists each thread as a separate entry with its own ID in the `PID` column. `top` does not have this problem. A similar [observation](https://stackoverflow.com/questions/38544265/multithreaded-python-program-starting-multiple-processes) was reported on StackOverflow.
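A minimal sketch to check this from inside Python: every thread reports the same process ID from `os.getpid()`, but a different OS-level thread ID from `threading.get_native_id()` (Python 3.8+). On Linux, those native IDs are the kernel thread IDs that `htop` displays when it shows threads; in `htop`, the `H` key toggles whether userland threads are listed. The helper `collect_ids` is hypothetical.

```python
import os
import threading


def collect_ids(num_threads: int = 4) -> tuple[set[int], set[int]]:
    pids: set[int] = set()
    native_ids: set[int] = set()
    lock = threading.Lock()
    # The barrier keeps every thread alive until all have recorded their IDs,
    # so the OS cannot reuse a thread ID within this run.
    barrier = threading.Barrier(num_threads)

    def record() -> None:
        with lock:
            pids.add(os.getpid())
            native_ids.add(threading.get_native_id())
        barrier.wait()

    threads = [threading.Thread(target=record) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return pids, native_ids


if __name__ == "__main__":
    pids, native_ids = collect_ids()
    print("process IDs:", pids)               # a single PID shared by every thread
    print("native thread IDs:", native_ids)   # one OS-level ID per thread
```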

## References

- [Speed Up Your Python Program With Concurrency](https://realpython.com/python-concurrency/)

- [Async Python: The Different Forms of Concurrency](http://masnun.rocks/2016/10/06/async-python-the-different-forms-of-concurrency/)


| Content Metadata | |
|---|---|
| Language | en |
| Author | Lei Mao |
| Publish Time | 2020-07-11 07:00:00 (5 years ago) |
| Original Publish Time | 2020-07-11 07:00:00 (5 years ago) |
| Republished | No |
