ℹ️ Skipped - page is already crawled

| Filter | Status | Condition | Details |

|---|---|---|---|

| HTTP status | PASS | download_http_code = 200 | HTTP 200 |

| Age cutoff | PASS | download_stamp > now() - 6 MONTH | 0.6 months ago (distributed domain, exempt) |

| History drop | PASS | isNull(history_drop_reason) | No drop reason |

| Spam/ban | PASS | fh_dont_index != 1 AND ml_spam_score = 0 | ml_spam_score=0 |

| Canonical | PASS | meta_canonical IS NULL OR = '' OR = src_unparsed | Not set |

| Property | Value | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| URL | https://en.wikipedia.org/wiki/Beta_distribution | |||||||||

| Last Crawled | 2026-04-06 13:05:55 (17 days ago) | |||||||||

| First Indexed | 2013-08-09 00:43:11 (12 years ago) | |||||||||

| HTTP Status Code | 200 | |||||||||

| Content | ||||||||||

| Meta Title | Beta distribution - Wikipedia | |||||||||

| Meta Description | null | |||||||||

| Meta Canonical | null | |||||||||

| Boilerpipe Text | Beta

Probability density function

Cumulative distribution function

Notation

Beta(

α

,

β

)

Parameters

α

> 0

shape

(

real

)

β

> 0

shape

(

real

)

Support

or

PDF

where

and

is the

Gamma function

.

CDF

(the

regularized incomplete beta function

)

Mean

(see section:

Geometric mean

)

where

is the

digamma function

Median

Mode

for

α

,

β

> 1

Any value in the domain for

α

=

β

= 1

No mode if

α

<1 or

β

<1. Density diverges

at 0 for

α

≤ 1, and at 1 if

β

≤ 1

Variance

(see

trigamma function

and see section:

Geometric variance

)

Skewness

Excess kurtosis

Entropy

MGF

CF

(see

Confluent hypergeometric function

)

Fisher information

see section:

Fisher information matrix

Method of moments

In

probability theory

and

statistics

, the

beta distribution

is a family of continuous

probability distributions

defined on the interval [0, 1] or (0, 1) in terms of two positive

parameters

, denoted by

alpha

(

α

) and

beta

(

β

), that appear as exponents of the variable and its complement to 1, respectively, and control the

shape

of the distribution.

The beta distribution has been applied to model the behavior of

random variables

limited to intervals of finite length in a wide variety of disciplines. The beta distribution is a suitable model for the random behavior of percentages and proportions.

In

Bayesian inference

, the beta distribution is the

conjugate prior probability distribution

for the

Bernoulli

,

binomial

,

negative binomial

, and

geometric

distributions.

The formulation of the beta distribution discussed here is also known as the

beta distribution of the first kind

, whereas

beta distribution of the second kind

is an alternative name for the

beta prime distribution

. The generalization to multiple variables is called a

Dirichlet distribution

.

Probability density function

[

edit

]

An animation of the beta distribution for different values of its parameters.

The

probability density function

(PDF) of the beta distribution, for

or

, and shape parameters

,

, is a

power function

of the variable

and of its

reflection

as follows:

where

is the

gamma function

. The

beta function

,

, is a

normalization constant

to ensure that the total probability is 1. In the above equations

is a

realization

—an observed value that actually occurred—of a

random variable

.

Several authors, including

N. L. Johnson

and

S. Kotz

,

[

1

]

use the symbols

and

(instead of

and

) for the shape parameters of the beta distribution, reminiscent of the symbols traditionally used for the parameters of the

Bernoulli distribution

, because the beta distribution approaches the Bernoulli distribution in the limit when both shape parameters

and

approach zero.

In the following, a random variable

beta-distributed with parameters

and

will be denoted by:

[

2

]

[

3

]

Other notations for beta-distributed random variables used in the statistical literature are

[

4

]

and

.

[

5

]

Cumulative distribution function

[

edit

]

CDF for symmetric beta distribution vs.

x

and

α

=

β

CDF for skewed beta distribution vs.

x

and

β

= 5

α

The

cumulative distribution function

is

where

is the

incomplete beta function

and

is the

regularized incomplete beta function

.

For positive integers

α

and

β

, the cumulative distribution function of a beta distribution can be expressed in terms of the cumulative distribution function of a

binomial distribution

with

[

6

]

Alternative parameterizations

[

edit

]

Mean and sample size

[

edit

]

The beta distribution may also be reparameterized in terms of its mean

μ

(0 <

μ

< 1)

and the sum of the two shape parameters

ν

=

α

+

β

> 0

(

[

3

]

p. 83). Denoting by αPosterior and βPosterior the shape parameters of the posterior beta distribution resulting from applying Bayes' theorem to a binomial likelihood function and a prior probability, the interpretation of the addition of both shape parameters to be sample size =

ν

=

α

·Posterior +

β

·Posterior is only correct for the Haldane prior probability Beta(0,0). Specifically, for the Bayes (uniform) prior Beta(1,1) the correct interpretation would be sample size =

α

·Posterior +

β

Posterior − 2, or

ν

= (sample size) + 2. For sample size much larger than 2, the difference between these two priors becomes negligible. (See section

Bayesian inference

for further details.)

ν

=

α

+

β

is referred to as the "sample size" of a beta distribution, but one should remember that it is, strictly speaking, the "sample size" of a binomial likelihood function only when using a Haldane Beta(0,0) prior in Bayes' theorem.

This parametrization may be useful in Bayesian parameter estimation. For example, one may administer a test to a number of individuals. If it is assumed that each person's score (0 ≤

θ

≤ 1) is drawn from a population-level beta distribution, then an important statistic is the mean of this population-level distribution. The mean and sample size parameters are related to the shape parameters

α

and

β

via

[

3

]

α

=

μν

,

β

= (1 −

μ

)

ν

Under this

parametrization

, one may place an

uninformative prior

probability over the mean, and a vague prior probability (such as an

exponential

or

gamma distribution

) over the positive reals for the sample size, if they are independent, and prior data and/or beliefs justify it.

Mode and concentration

[

edit

]

Concave

beta distributions, which have

, can be parametrized in terms of mode and "concentration". The mode,

, and concentration,

, can be used to define the usual shape parameters as follows:

[

7

]

For the mode,

, to be well-defined, we need

, or equivalently

. If instead we define the concentration as

, the condition simplifies to

and the beta density at

and

can be written as:

where

directly scales the

sufficient statistics

,

and

. Note also that in the limit,

, the distribution becomes flat.

Solving the system of (coupled) equations given in the above sections as the equations for the mean and the variance of the beta distribution in terms of the original parameters

α

and

β

, one can express the

α

and

β

parameters in terms of the mean (

μ

) and the variance (var):

This

parametrization

of the beta distribution may lead to a more intuitive understanding than the one based on the original parameters

α

and

β

. For example, by expressing the mode, skewness, excess kurtosis and differential entropy in terms of the mean and the variance:

A beta distribution with the two shape parameters

α

and

β

is supported on the range [0,1] or (0,1). It is possible to alter the location and scale of the distribution by introducing two further parameters representing the minimum,

a

, and maximum

c

(

c

>

a

), values of the distribution,

[

1

]

by a linear transformation substituting the non-dimensional variable

x

in terms of the new variable

y

(with support [

a

,

c

] or (

a

,

c

)) and the parameters

a

and

c

:

The

probability density function

of the four parameter beta distribution is equal to the two parameter distribution, scaled by the range (

c

−

a

), (so that the total area under the density curve equals a probability of one), and with the "y" variable shifted and scaled as follows:

That a random variable

Y

is beta-distributed with four parameters

α

,

β

,

a

, and

c

will be denoted by:

Some measures of central location are scaled (by (

c

−

a

)) and shifted (by

a

), as follows:

Note: the geometric mean and harmonic mean cannot be transformed by a linear transformation in the way that the mean, median and mode can.

The shape parameters of

Y

can be written in term of its mean and variance as

The statistical dispersion measures are scaled (they do not need to be shifted because they are already centered on the mean) by the range (

c

−

a

), linearly for the mean deviation and nonlinearly for the variance:

Since the

skewness

and

excess kurtosis

are non-dimensional quantities (as

moments

centered on the mean and normalized by the

standard deviation

), they are independent of the parameters

a

and

c

, and therefore equal to the expressions given above in terms of

X

(with support [0,1] or (0,1)):

Measures of central tendency

[

edit

]

The

mode

of a beta distributed

random variable

X

with

α

,

β

> 1 is the most likely value of the distribution (corresponding to the peak in the PDF), and is given by the following expression:

[

1

]

When both parameters are less than one (

α

,

β

< 1), this is the anti-mode: the lowest point of the probability density curve.

[

8

]

Letting

α

=

β

, the expression for the mode simplifies to 1/2, showing that for

α

=

β

> 1 the mode (resp. anti-mode when

α

,

β

< 1

), is at the center of the distribution: it is symmetric in those cases. See

Shapes

section in this article for a full list of mode cases, for arbitrary values of

α

and

β

. For several of these cases, the maximum value of the density function occurs at one or both ends. In some cases the (maximum) value of the density function occurring at the end is finite. For example, in the case of

α

= 2,

β

= 1 (or

α

= 1,

β

= 2), the density function becomes a

right-triangle distribution

which is finite at both ends. In several other cases there is a

singularity

at one end, where the value of the density function approaches infinity. For example, in the case

α

=

β

= 1/2, the beta distribution simplifies to become the

arcsine distribution

. There is debate among mathematicians about some of these cases and whether the ends (

x

= 0, and

x

= 1) can be called

modes

or not.

[

9

]

[

2

]

Mode for beta distribution for 1 ≤

α

≤ 5 and 1 ≤ β ≤ 5

Whether the ends are part of the

domain

of the density function

Whether a

singularity

can ever be called a

mode

Whether cases with two maxima should be called

bimodal

Median for beta distribution for 0 ≤

α

≤ 5 and 0 ≤

β

≤ 5

(Mean–median) for beta distribution versus alpha and beta from 0 to 2

The median of the beta distribution is the unique real number

for which the

regularized incomplete beta function

. There is no general

closed-form expression

for the

median

of the beta distribution for arbitrary values of

α

and

β

.

Closed-form expressions

for particular values of the parameters

α

and

β

follow:

[

citation needed

]

The following are the limits with one parameter finite (non-zero) and the other approaching these limits:

[

citation needed

]

A reasonable approximation of the value of the median of the beta distribution, for both α and β greater or equal to one, is given by the formula

[

10

]

When

α

,

β

≥ 1, the

relative error

(the

absolute error

divided by the median) in this approximation is less than 4% and for both

α

≥ 2 and

β

≥ 2 it is less than 1%. The

absolute error

divided by the difference between the mean and the mode is similarly small:

Mean for beta distribution for

0 ≤

α

≤ 5

and

0 ≤

β

≤ 5

The

expected value

(mean) (

μ

) of a beta distribution

random variable

X

with two parameters

α

and

β

is a function of only the ratio

β

/

α

of these parameters:

[

1

]

Letting

α

=

β

in the above expression one obtains

μ

= 1/2

, showing that for

α

=

β

the mean is at the center of the distribution: it is symmetric. Also, the following limits can be obtained from the above expression:

Therefore, for

β

/

α

→ 0, or for

α

/

β

→ ∞, the mean is located at the right end,

x

= 1

. For these limit ratios, the beta distribution becomes a one-point

degenerate distribution

with a

Dirac delta function

spike at the right end,

x

= 1

, with probability 1, and zero probability everywhere else. There is 100% probability (absolute certainty) concentrated at the right end,

x

= 1

.

Similarly, for

β

/

α

→ ∞, or for

α

/

β

→ 0, the mean is located at the left end,

x

= 0

. The beta distribution becomes a 1-point

Degenerate distribution

with a

Dirac delta function

spike at the left end,

x

= 0, with probability 1, and zero probability everywhere else. There is 100% probability (absolute certainty) concentrated at the left end,

x

= 0. Following are the limits with one parameter finite (non-zero) and the other approaching these limits:

While for typical unimodal distributions (with centrally located modes, inflexion points at both sides of the mode, and longer tails) (with Beta(

α

,

β

) such that

α

,

β

> 2

) it is known that the sample mean (as an estimate of location) is not as

robust

as the sample median, the opposite is the case for uniform or "U-shaped" bimodal distributions (with Beta(

α

,

β

) such that

α

,

β

≤ 1

), with the modes located at the ends of the distribution. As Mosteller and Tukey remark (

[

11

]

p. 207) "the average of the two extreme observations uses all the sample information. This illustrates how, for short-tailed distributions, the extreme observations should get more weight." By contrast, it follows that the median of "U-shaped" bimodal distributions with modes at the edge of the distribution (with Beta(

α

,

β

) such that

α

,

β

≤ 1

) is not robust, as the sample median drops the extreme sample observations from consideration. A practical application of this occurs for example for

random walks

, since the probability for the time of the last visit to the origin in a random walk is distributed as the

arcsine distribution

Beta(1/2, 1/2):

[

5

]

[

12

]

the mean of a number of

realizations

of a random walk is a much more robust estimator than the median (which is an inappropriate sample measure estimate in this case).

(Mean − GeometricMean) for beta distribution versus

α

and

β

from 0 to 2, showing the asymmetry between

α

and

β

for the geometric mean

Geometric means for beta distribution Purple =

G

(

x

), Yellow =

G

(1 −

x

), smaller values

α

and

β

in front

Geometric means for beta distribution. purple =

G

(

x

), yellow =

G

(1 −

x

), larger values

α

and

β

in front

The logarithm of the

geometric mean

G

X

of a distribution with

random variable

X

is the arithmetic mean of ln(

X

), or, equivalently, its expected value:

For a beta distribution, the expected value integral gives:

where

ψ

is the

digamma function

.

Therefore, the geometric mean of a beta distribution with shape parameters

α

and

β

is the exponential of the digamma functions of

α

and

β

as follows:

While for a beta distribution with equal shape parameters

α

=

β

, it follows that skewness = 0 and mode = mean = median = 1/2, the geometric mean is less than 1/2:

0 <

G

X

< 1/2

. The reason for this is that the logarithmic transformation strongly weights the values of

X

close to zero, as ln(

X

) strongly tends towards negative infinity as

X

approaches zero, while ln(

X

) flattens towards zero as

X

→ 1

.

Along a line

α

=

β

, the following limits apply:

Following are the limits with one parameter finite (non-zero) and the other approaching these limits:

The accompanying plot shows the difference between the mean and the geometric mean for shape parameters

α

and

β

from zero to 2. Besides the fact that the difference between them approaches zero as

α

and

β

approach infinity and that the difference becomes large for values of

α

and

β

approaching zero, one can observe an evident asymmetry of the geometric mean with respect to the shape parameters

α

and

β

. The difference between the geometric mean and the mean is larger for small values of

α

in relation to

β

than when exchanging the magnitudes of

β

and

α

.

N. L.Johnson

and

S. Kotz

[

1

]

suggest the logarithmic approximation to the digamma function

ψ

(

α

) ≈ ln(

α

− 1/2) which results in the following approximation to the geometric mean:

Numerical values for the

relative error

in this approximation follow: [

(

α

=

β

= 1): 9.39%

]; [

(

α

=

β

= 2): 1.29%

]; [

(

α

= 2,

β

= 3): 1.51%

]; [

(

α

= 3,

β

= 2): 0.44%

]; [

(

α

=

β

= 3): 0.51%

]; [

(

α

=

β

= 4): 0.26%

]; [

(

α

= 3,

β

= 4): 0.55%

]; [

(

α

= 4,

β

= 3): 0.24%

].

Similarly, one can calculate the value of shape parameters required for the geometric mean to equal 1/2. Given the value of the parameter

β

, what would be the value of the other parameter,

α

, required for the geometric mean to equal 1/2?. The answer is that (for

β

> 1

), the value of

α

required tends towards

β

+ 1/2

as

β

→ ∞

. For example, all these couples have the same geometric mean of 1/2: [

β

= 1,

α

= 1.4427

], [

β

= 2,

α

= 2.46958

], [

β

= 3,

α

= 3.47943

], [

β

= 4,

α

= 4.48449

], [

β

= 5,

α

= 5.48756

], [

β

= 10,

α

= 10.4938

], [

β

= 100,

α

= 100.499

].

The fundamental property of the geometric mean, which can be proven to be false for any other mean, is

This makes the geometric mean the only correct mean when averaging

normalized

results, that is results that are presented as ratios to reference values.

[

13

]

This is relevant because the beta distribution is a suitable model for the random behavior of percentages and it is particularly suitable to the statistical modelling of proportions. The geometric mean plays a central role in maximum likelihood estimation, see section "Parameter estimation, maximum likelihood." Actually, when performing maximum likelihood estimation, besides the

geometric mean

G

X

based on the random variable X, also another geometric mean appears naturally: the

geometric mean

based on the linear transformation ––

(1 −

X

)

, the mirror-image of

X

, denoted by

G

(1−

X

)

:

Along a line

α

=

β

, the following limits apply:

Following are the limits with one parameter finite (non-zero) and the other approaching these limits:

It has the following approximate value:

Although both

G

X

and

G

1−

X

are asymmetric, in the case that both shape parameters are equal

α

=

β

, the geometric means are equal:

G

X

=

G

(1−

X

)

. This equality follows from the following symmetry displayed between both geometric means:

Harmonic mean for beta distribution for 0 <

α

< 5 and 0 <

β

< 5

Harmonic mean for beta distribution versus

α

and

β

from 0 to 2

Harmonic means for beta distribution Purple =

H

(

X

), Yellow =

H

(1 −

X

), smaller values

α

and

β

in front

Harmonic means for beta distribution: purple =

H

(

X

), yellow =

H

(1 −

X

), larger values

α

and

β

in front

The inverse of the

harmonic mean

(

H

X

) of a distribution with

random variable

X

is the arithmetic mean of 1/

X

, or, equivalently, its expected value. Therefore, the

harmonic mean

(

H

X

) of a beta distribution with shape parameters

α

and

β

is:

The

harmonic mean

(

H

X

) of a beta distribution with

α

< 1 is undefined, because its defining expression is not bounded in [0, 1] for shape parameter

α

less than unity.

Letting

α

=

β

in the above expression one obtains

showing that for

α

=

β

the harmonic mean ranges from 0, for

α

=

β

= 1, to 1/2, for

α

=

β

→ ∞.

Following are the limits with one parameter finite (non-zero) and the other approaching these limits:

The harmonic mean plays a role in maximum likelihood estimation for the four parameter case, in addition to the geometric mean. Actually, when performing maximum likelihood estimation for the four parameter case, besides the harmonic mean

H

X

based on the random variable

X

, also another harmonic mean appears naturally: the harmonic mean based on the linear transformation (1 −

X

), the mirror-image of

X

, denoted by

H

1 −

X

:

The

harmonic mean

(

H

(1 −

X

)

) of a beta distribution with

β

< 1 is undefined, because its defining expression is not bounded in [0, 1] for shape parameter

β

less than unity.

Letting

α

=

β

in the above expression one obtains

showing that for

α

=

β

the harmonic mean ranges from 0, for

α

=

β

= 1, to 1/2, for

α

=

β

→ ∞.

Following are the limits with one parameter finite (non-zero) and the other approaching these limits:

Although both

H

X

and

H

1−

X

are asymmetric, in the case that both shape parameters are equal

α

=

β

, the harmonic means are equal:

H

X

=

H

1−

X

. This equality follows from the following symmetry displayed between both harmonic means:

Measures of statistical dispersion

[

edit

]

The

variance

(the second moment centered on the mean) of a beta distribution

random variable

X

with parameters

α

and

β

is:

[

1

]

[

14

]

Letting

α

=

β

in the above expression one obtains

showing that for

α

=

β

the variance decreases monotonically as

α

=

β

increases. Setting

α

=

β

= 0

in this expression, one finds the maximum variance var(

X

) = 1/4

[

1

]

which only occurs approaching the limit, at

α

=

β

= 0

.

The beta distribution may also be

parametrized

in terms of its mean

μ

(0 <

μ

< 1)

and sample size

ν

=

α

+

β

(

ν

> 0

) (see subsection

Mean and sample size

):

Using this

parametrization

, one can express the variance in terms of the mean

μ

and the sample size

ν

as follows:

Since

ν

=

α

+

β

> 0

, it follows that

var(

X

) <

μ

(1 −

μ

)

.

For a symmetric distribution, the mean is at the middle of the distribution,

μ

= 1/2

, and therefore:

Also, the following limits (with only the noted variable approaching the limit) can be obtained from the above expressions:

Geometric variance and covariance

[

edit

]

log geometric variances vs.

α

and

β

log geometric variances vs.

α

and

β

The logarithm of the geometric variance, ln(var

GX

), of a distribution with

random variable

X

is the second moment of the logarithm of

X

centered on the geometric mean of

X

, ln(

G

X

):

and therefore, the geometric variance is:

In the

Fisher information

matrix, and the curvature of the log

likelihood function

, the logarithm of the geometric variance of the

reflected

variable 1 −

X

and the logarithm of the geometric covariance between

X

and 1 −

X

appear:

For a beta distribution, higher order logarithmic moments can be derived by using the representation of a beta distribution as a proportion of two gamma distributions and differentiating through the integral. They can be expressed in terms of higher order poly-gamma functions. See the section

§ Moments of logarithmically transformed random variables

. The

variance

of the logarithmic variables and

covariance

of ln

X

and ln(1−

X

) are:

where the

trigamma function

, denoted

ψ

1

(

α

), is the second of the

polygamma functions

, and is defined as the derivative of the

digamma function

:

Therefore,

The accompanying plots show the log geometric variances and log geometric covariance versus the shape parameters

α

and

β

. The plots show that the log geometric variances and log geometric covariance are close to zero for shape parameters

α

and

β

greater than 2, and that the log geometric variances rapidly rise in value for shape parameter values

α

and

β

less than unity. The log geometric variances are positive for all values of the shape parameters. The log geometric covariance is negative for all values of the shape parameters, and it reaches large negative values for

α

and

β

less than unity.

Following are the limits with one parameter finite (non-zero) and the other approaching these limits:

Limits with two parameters varying:

Although both ln(var

GX

) and ln(var

G

(1 −

X

)

) are asymmetric, when the shape parameters are equal,

α

=

β

, one has: ln(var

GX

) = ln(var

G

(1−

X

)

). This equality follows from the following symmetry displayed between both log geometric variances:

The log geometric covariance is symmetric:

Mean absolute deviation around the mean

[

edit

]

Ratio of, ean abs.dev. to std.dev. for beta distribution with α and β ranging from 0 to 5

Ratio of mean abs.dev. to std.dev. for beta distribution with mean 0 ≤

μ

≤ 1 and sample size 0 <

ν

≤ 10

The

mean absolute deviation

around the mean for the beta distribution with shape parameters

α

and

β

is:

[

9

]

The mean absolute deviation around the mean is a more

robust

estimator

of

statistical dispersion

than the standard deviation for beta distributions with tails and inflection points at each side of the mode, Beta(

α

,

β

) distributions with

α

,

β

> 2, as it depends on the linear (absolute) deviations rather than the square deviations from the mean. Therefore, the effect of very large deviations from the mean are not as overly weighted.

Using

Stirling's approximation

to the

Gamma function

,

N.L.Johnson

and

S.Kotz

[

1

]

derived the following approximation for values of the shape parameters greater than unity (the relative error for this approximation is only −3.5% for

α

=

β

= 1, and it decreases to zero as

α

→ ∞,

β

→ ∞):

At the limit

α

→ ∞,

β

→ ∞, the ratio of the mean absolute deviation to the standard deviation (for the beta distribution) becomes equal to the ratio of the same measures for the normal distribution:

. For

α

=

β

= 1 this ratio equals

, so that from

α

=

β

= 1 to

α

,

β

→ ∞ the ratio decreases by 8.5%. For

α

=

β

= 0 the standard deviation is exactly equal to the mean absolute deviation around the mean. Therefore, this ratio decreases by 15% from

α

=

β

= 0 to

α

=

β

= 1, and by 25% from

α

=

β

= 0 to

α

,

β

→ ∞ . However, for skewed beta distributions such that

α

→ 0 or

β

→ 0, the ratio of the standard deviation to the mean absolute deviation approaches infinity (although each of them, individually, approaches zero) because the mean absolute deviation approaches zero faster than the standard deviation.

Using the

parametrization

in terms of mean

μ

and sample size

ν

=

α

+

β

> 0:

α

=

μν

,

β

= (1 −

μ

)

ν

one can express the mean

absolute deviation

around the mean in terms of the mean

μ

and the sample size

ν

as follows:

For a symmetric distribution, the mean is at the middle of the distribution,

μ

= 1/2, and therefore:

Also, the following limits (with only the noted variable approaching the limit) can be obtained from the above expressions:

Mean absolute difference

[

edit

]

The

mean absolute difference

for the beta distribution is:

The

Gini coefficient

for the beta distribution is half of the relative mean absolute difference:

Skewness for beta distribution as a function of variance and mean

The

skewness

(the third moment centered on the mean, normalized by the 3/2 power of the variance) of the beta distribution is

[

1

]

Letting

α

=

β

in the above expression one obtains

γ

1

= 0, showing once again that for

α

=

β

the distribution is symmetric and hence the skewness is zero. Positive skew (right-tailed) for

α

<

β

, negative skew (left-tailed) for

α

>

β

.

Using the

parametrization

in terms of mean

μ

and sample size

ν

=

α

+

β

:

one can express the skewness in terms of the mean

μ

and the sample size ν as follows:

The skewness can also be expressed just in terms of the variance

var

and the mean

μ

as follows:

The accompanying plot of skewness as a function of variance and mean shows that maximum variance (1/4) is coupled with zero skewness and the symmetry condition (

μ

= 1/2), and that maximum skewness (positive or negative infinity) occurs when the mean is located at one end or the other, so that the "mass" of the probability distribution is concentrated at the ends (minimum variance).

The following expression for the square of the skewness, in terms of the sample size

ν

=

α

+

β

and the variance var, is useful for the method of moments estimation of four parameters:

This expression correctly gives a skewness of zero for

α

=

β

, since in that case (see

§ Variance

):

.

For the symmetric case (

α

=

β

), skewness = 0 over the whole range, and the following limits apply:

For the asymmetric cases (

α

≠

β

) the following limits (with only the noted variable approaching the limit) can be obtained from the above expressions:

Excess Kurtosis for Beta Distribution as a function of variance and mean

The beta distribution has been applied in acoustic analysis to assess damage to gears, as the kurtosis of the beta distribution has been reported to be a good indicator of the condition of a gear.

[

15

]

Kurtosis has also been used to distinguish the seismic signal generated by a person's footsteps from other signals. As persons or other targets moving on the ground generate continuous signals in the form of seismic waves, one can separate different targets based on the seismic waves they generate. Kurtosis is sensitive to impulsive signals, so it's much more sensitive to the signal generated by human footsteps than other signals generated by vehicles, winds, noise, etc.

[

16

]

Unfortunately, the notation for kurtosis has not been standardized. Kenney and Keeping

[

17

]

use the symbol γ

2

for the

excess kurtosis

, but

Abramowitz and Stegun

[

18

]

use different terminology. To prevent confusion

[

19

]

between kurtosis (the fourth moment centered on the mean, normalized by the square of the variance) and excess kurtosis, when using symbols, they will be spelled out as follows:

[

9

]

[

20

]

Letting

α

=

β

in the above expression one obtains

Therefore, for symmetric beta distributions, the excess kurtosis is negative, increasing from a minimum value of −2 at the limit as {

α

=

β

} → 0, and approaching a maximum value of zero as {

α

=

β

} → ∞. The value of −2 is the minimum value of excess kurtosis that any distribution (not just beta distributions, but any distribution of any possible kind) can ever achieve. This minimum value is reached when all the probability density is entirely concentrated at each end

x

= 0 and

x

= 1, with nothing in between: a 2-point

Bernoulli distribution

with equal probability 1/2 at each end (a coin toss: see section below "Kurtosis bounded by the square of the skewness" for further discussion). The description of

kurtosis

as a measure of the "potential outliers" (or "potential rare, extreme values") of the probability distribution, is correct for all distributions including the beta distribution. When rare, extreme values can occur in the beta distribution, the higher its kurtosis; otherwise, the kurtosis is lower. For

α

≠

β

, skewed beta distributions, the excess kurtosis can reach unlimited positive values (particularly for

α

→ 0 for finite

β

, or for

β

→ 0 for finite

α

) because the side away from the mode will produce occasional extreme values. Minimum kurtosis takes place when the mass density is concentrated equally at each end (and therefore the mean is at the center), and there is no probability mass density in between the ends.

Using the

parametrization

in terms of mean

μ

and sample size

ν

=

α

+

β

:

one can express the excess kurtosis in terms of the mean

μ

and the sample size

ν

as follows:

The excess kurtosis can also be expressed in terms of just the following two parameters: the variance var, and the sample size

ν

as follows:

and, in terms of the variance

var

and the mean

μ

as follows:

The plot of excess kurtosis as a function of the variance and the mean shows that the minimum value of the excess kurtosis (−2, which is the minimum possible value for excess kurtosis for any distribution) is intimately coupled with the maximum value of variance (1/4) and the symmetry condition: the mean occurring at the midpoint (

μ

= 1/2). This occurs for the symmetric case of

α

=

β

= 0, with zero skewness. At the limit, this is the 2 point

Bernoulli distribution

with equal probability 1/2 at each

Dirac delta function

end

x

= 0 and

x

= 1 and zero probability everywhere else. (A coin toss: one face of the coin being

x

= 0 and the other face being

x

= 1.) Variance is maximum because the distribution is bimodal with nothing in between the two modes (spikes) at each end. Excess kurtosis is minimum: the probability density "mass" is zero at the mean and it is concentrated at the two peaks at each end. Excess kurtosis reaches the minimum possible value (for any distribution) when the probability density function has two spikes at each end: it is bi-"peaky" with nothing in between them.

On the other hand, the plot shows that for extreme skewed cases, where the mean is located near one or the other end (

μ

= 0 or

μ

= 1), the variance is close to zero, and the excess kurtosis rapidly approaches infinity when the mean of the distribution approaches either end.

Alternatively, the excess kurtosis can also be expressed in terms of just the following two parameters: the square of the skewness, and the sample size ν as follows:

From this last expression, one can obtain the same limits published over a century ago by

Karl Pearson

[

21

]

for the beta distribution (see section below titled "Kurtosis bounded by the square of the skewness"). Setting

α

+

β

=

ν

= 0 in the above expression, one obtains Pearson's lower boundary (values for the skewness and excess kurtosis below the boundary (excess kurtosis + 2 − skewness

2

= 0) cannot occur for any distribution, and hence

Karl Pearson

appropriately called the region below this boundary the "impossible region"). The limit of

α

+

β

=

ν

→ ∞ determines Pearson's upper boundary.

therefore:

Values of

ν

=

α

+

β

such that

ν

ranges from zero to infinity, 0 <

ν

< ∞, span the whole region of the beta distribution in the plane of excess kurtosis versus squared skewness.

For the symmetric case (

α

=

β

), the following limits apply:

For the unsymmetric cases (

α

≠

β

) the following limits (with only the noted variable approaching the limit) can be obtained from the above expressions:

Characteristic function

[

edit

]

Re(characteristic function)

symmetric case

α

=

β

ranging from 25 to 0

Re(characteristic function)

symmetric case

α

=

β

ranging from 0 to 25

Re(characteristic function)

β

=

α

+ 1/2;

α

ranging from 25 to 0

Re(characteristic function)

α

=

β

+ 1/2;

β

ranging from 25 to 0

Re(characteristic function)

α

=

β

+ 1/2;

β

ranging from 0 to 25

The

characteristic function

is the

Fourier transform

of the probability density function. The characteristic function of the beta distribution is

Kummer's confluent hypergeometric function

(of the first kind):

[

1

]

[

18

]

[

22

]

where

is the

rising factorial

. The value of the characteristic function for

t

= 0, is one:

Also, the real and imaginary parts of the characteristic function enjoy the following symmetries with respect to the origin of variable

t

:

The symmetric case

α

=

β

simplifies the characteristic function of the beta distribution to a

Bessel function

, since in the special case

α

+

β

= 2

α

the

confluent hypergeometric function

(of the first kind) reduces to a

Bessel function

(the modified Bessel function of the first kind

) using

Kummer's

second transformation as follows:

In the accompanying plots, the

real part

(Re) of the

characteristic function

of the beta distribution is displayed for symmetric (

α

=

β

) and skewed (

α

≠

β

) cases.

Moment generating function

[

edit

]

It also follows

[

1

]

[

9

]

that the

moment generating function

is

In particular

M

X

(

α

;

β

; 0) = 1.

Using the

moment generating function

, the

k

-th

raw moment

is given by

[

1

]

the factor

multiplying the (exponential series) term

in the series of the

moment generating function

where (

x

)

(

k

)

is a

Pochhammer symbol

representing rising factorial. It can also be written in a recursive form as

Since the moment generating function

has a positive radius of convergence,

[

citation needed

]

the beta distribution is

determined by its moments

.

[

23

]

Moments of transformed random variables

[

edit

]

Moments of linearly transformed, product and inverted random variables

[

edit

]

One can also show the following expectations for a transformed random variable,

[

1

]

where the random variable

X

is Beta-distributed with parameters

α

and

β

:

X

~ Beta(

α

,

β

). The expected value of the variable 1 −

X

is the mirror-symmetry of the expected value based on

X

:

Due to the mirror-symmetry of the probability density function of the beta distribution, the variances based on variables

X

and 1 −

X

are identical, and the covariance on

X

(1 −

X

) is the negative of the variance:

These are the expected values for inverted variables, (these are related to the harmonic means, see

§ Harmonic mean

):

The following transformation by dividing the variable

X

by its mirror-image

X

/(1 −

X

)) results in the expected value of the "inverted beta distribution" or

beta prime distribution

(also known as beta distribution of the second kind or

Pearson's Type VI

):

[

1

]

Variances of these transformed variables can be obtained by integration, as the expected values of the second moments centered on the corresponding variables:

The following variance of the variable

X

divided by its mirror-image (

X

/(1−

X

) results in the variance of the "inverted beta distribution" or

beta prime distribution

(also known as beta distribution of the second kind or

Pearson's Type VI

):

[

1

]

The covariances are:

These expectations and variances appear in the four-parameter Fisher information matrix (

§ Fisher information

.)

Moments of logarithmically transformed random variables

[

edit

]

Plot of logit(

X

) = ln(

X

/(1 −

X

)) (vertical axis) vs.

X

in the domain of 0 to 1 (horizontal axis). Logit transformations are interesting, as they usually transform various shapes (including J-shapes) into (usually skewed) bell-shaped densities over the logit variable, and they may remove the end singularities over the original variable

Expected values for

logarithmic transformations

(useful for

maximum likelihood

estimates, see

§ Parameter estimation, Maximum likelihood

) are discussed in this section. The following logarithmic linear transformations are related to the geometric means

G

X

and

G

1−

X

(see

§ Geometric Mean

):

Where the

digamma function

ψ

(

α

) is defined as the

logarithmic derivative

of the

gamma function

:

[

18

]

Logit

transformations are interesting,

[

24

]

as they usually transform various shapes (including J-shapes) into (usually skewed) bell-shaped densities over the logit variable, and they may remove the end singularities over the original variable:

Johnson

[

25

]

considered the distribution of the

logit

– transformed variable ln(

X

/1 −

X

), including its moment generating function and approximations for large values of the shape parameters. This transformation extends the finite support [0, 1] based on the original variable

X

to infinite support in both directions of the real line (−∞, +∞). The logit of a beta variate has the

logistic-beta distribution

.

Higher order logarithmic moments can be derived by using the representation of a beta distribution as a proportion of two gamma distributions and differentiating through the integral. They can be expressed in terms of higher order poly-gamma functions as follows:

therefore the

variance

of the logarithmic variables and

covariance

of ln(

X

) and ln(1−

X

) are:

where the

trigamma function

, denoted

ψ

1

(

α

), is the second of the

polygamma functions

, and is defined as the derivative of the

digamma

function:

The variances and covariance of the logarithmically transformed variables

X

and (1 −

X

) are different, in general, because the logarithmic transformation destroys the mirror-symmetry of the original variables

X

and (1 −

X

), as the logarithm approaches negative infinity for the variable approaching zero.

These logarithmic variances and covariance are the elements of the

Fisher information

matrix for the beta distribution. They are also a measure of the curvature of the log likelihood function (see section on Maximum likelihood estimation).

The variances of the log inverse variables are identical to the variances of the log variables:

It also follows that the variances of the

logit

-transformed variables are

Quantities of information (entropy)

[

edit

]

Given a beta distributed random variable,

X

~ Beta(

α

,

β

), the

differential entropy

of

X

is (measured in

nats

),

[

26

]

the expected value of the negative of the logarithm of the

probability density function

:

where

f

(

x

;

α

,

β

) is the

probability density function

of the beta distribution:

The

digamma function

ψ

appears in the formula for the differential entropy as a consequence of Euler's integral formula for the

harmonic numbers

which follows from the integral:

The

differential entropy

of the beta distribution is negative for all values of

α

and

β

greater than zero, except at

α

=

β

= 1 (for which values the beta distribution is the same as the

uniform distribution

), where the

differential entropy

reaches its

maximum

value of zero. It is to be expected that the maximum entropy should take place when the beta distribution becomes equal to the uniform distribution, since uncertainty is maximal when all possible events are equiprobable.

For

α

or

β

approaching zero, the

differential entropy

approaches its

minimum

value of negative infinity. For (either or both)

α

or

β

approaching zero, there is a maximum amount of order: all the probability density is concentrated at the ends, and there is zero probability density at points located between the ends. Similarly for (either or both)

α

or

β

approaching infinity, the differential entropy approaches its minimum value of negative infinity, and a maximum amount of order. If either

α

or

β

approaches infinity (and the other is finite) all the probability density is concentrated at an end, and the probability density is zero everywhere else. If both shape parameters are equal (the symmetric case),

α

=

β

, and they approach infinity simultaneously, the probability density becomes a spike (

Dirac delta function

) concentrated at the middle

x

= 1/2, and hence there is 100% probability at the middle

x

= 1/2 and zero probability everywhere else.

The (continuous case)

differential entropy

was introduced by Shannon in his original paper (where he named it the "entropy of a continuous distribution"), as the concluding part of the same paper where he defined the

discrete entropy

.

[

27

]

It is known since then that the differential entropy may differ from the

infinitesimal

limit of the discrete entropy by an infinite offset, therefore the differential entropy can be negative (as it is for the beta distribution). What really matters is the relative value of entropy.

Given two beta distributed random variables,

X

1

~ Beta(

α

,

β

) and

X

2

~ Beta(

α

′

,

β

′

), the

cross-entropy

is (measured in nats)

[

28

]

The

cross entropy

has been used as an error metric to measure the distance between two hypotheses.

[

29

]

[

30

]

Its absolute value is minimum when the two distributions are identical. It is the information measure most closely related to the log maximum likelihood

[

28

]

(see section on "Parameter estimation. Maximum likelihood estimation")).

The relative entropy, or

Kullback–Leibler divergence

D

KL

(

X

1

||

X

2

), is a measure of the inefficiency of assuming that the distribution is

X

2

~ Beta(

α

′

,

β

′

) when the distribution is really

X

1

~ Beta(

α

,

β

). It is defined as follows (measured in nats).

The relative entropy, or

Kullback–Leibler divergence

, is always non-negative. A few numerical examples follow:

X

1

~ Beta(1, 1) and

X

2

~ Beta(3, 3);

D

KL

(

X

1

||

X

2

) = 0.598803;

D

KL

(

X

2

||

X

1

) = 0.267864;

h

(

X

1

) = 0;

h

(

X

2

) = −0.267864

X

1

~ Beta(3, 0.5) and

X

2

~ Beta(0.5, 3);

D

KL

(

X

1

||

X

2

) = 7.21574;

D

KL

(

X

2

||

X

1

) = 7.21574;

h

(

X

1

) = −1.10805;

h

(

X

2

) = −1.10805.

The

Kullback–Leibler divergence

is not symmetric

D

KL

(

X

1

||

X

2

) ≠

D

KL

(

X

2

||

X

1

) for the case in which the individual beta distributions Beta(1, 1) and Beta(3, 3) are symmetric, but have different entropies

h

(

X

1

) ≠

h

(

X

2

). The value of the Kullback divergence depends on the direction traveled: whether going from a higher (differential) entropy to a lower (differential) entropy or the other way around. In the numerical example above, the Kullback divergence measures the inefficiency of assuming that the distribution is (bell-shaped) Beta(3, 3), rather than (uniform) Beta(1, 1). The "h" entropy of Beta(1, 1) is higher than the "h" entropy of Beta(3, 3) because the uniform distribution Beta(1, 1) has a maximum amount of disorder. The Kullback divergence is more than two times higher (0.598803 instead of 0.267864) when measured in the direction of decreasing entropy: the direction that assumes that the (uniform) Beta(1, 1) distribution is (bell-shaped) Beta(3, 3) rather than the other way around. In this restricted sense, the Kullback divergence is consistent with the

second law of thermodynamics

.

The

Kullback–Leibler divergence

is symmetric

D

KL

(

X

1

||

X

2

) =

D

KL

(

X

2

||

X

1

) for the skewed cases Beta(3, 0.5) and Beta(0.5, 3) that have equal differential entropy

h

(

X

1

) =

h

(

X

2

).

The symmetry condition:

follows from the above definitions and the mirror-symmetry

f

(

x

;

α

,

β

) =

f

(1 −

x

;

α

,

β

) enjoyed by the beta distribution.

Relationships between statistical measures

[

edit

]

Mean, mode and median relationship

[

edit

]

If 1 <

α

<

β

then mode ≤ median ≤ mean.

[

10

]

Expressing the mode (only for

α

,

β

> 1), and the mean in terms of

α

and

β

:

If 1 <

β

<

α

then the order of the inequalities are reversed. For

α

,

β

> 1 the absolute distance between the mean and the median is less than 5% of the distance between the maximum and minimum values of

x

. On the other hand, the absolute distance between the mean and the mode can reach 50% of the distance between the maximum and minimum values of

x

, for the (

pathological

) case of

α

= 1 and

β

= 1, for which values the beta distribution approaches the uniform distribution and the

differential entropy

approaches its

maximum

value, and hence maximum "disorder".

For example, for

α

= 1.0001 and

β

= 1.00000001:

mode = 0.9999; PDF(mode) = 1.00010

mean = 0.500025; PDF(mean) = 1.00003

median = 0.500035; PDF(median) = 1.00003

mean − mode = −0.499875

mean − median = −9.65538 × 10

−6

where PDF stands for the value of the

probability density function

.

Mean, geometric mean and harmonic mean relationship

[

edit

]

:Mean, median, geometric mean and harmonic mean for beta distribution with 0 <

α

=

β

< 5

It is known from the

inequality of arithmetic and geometric means

that the geometric mean is lower than the mean. Similarly, the harmonic mean is lower than the geometric mean. The accompanying plot shows that for

α

=

β

, both the mean and the median are exactly equal to 1/2, regardless of the value of

α

=

β

, and the mode is also equal to 1/2 for

α

=

β

> 1, however the geometric and harmonic means are lower than 1/2 and they only approach this value asymptotically as

α

=

β

→ ∞.

Kurtosis bounded by the square of the skewness

[

edit

]

Beta distribution

α

and

β

parameters vs. excess kurtosis and squared skewness

As remarked by

Feller

,

[

5

]

in the

Pearson system

the beta probability density appears as

type I

(any difference between the beta distribution and Pearson's type I distribution is only superficial and it makes no difference for the following discussion regarding the relationship between kurtosis and skewness).

Karl Pearson

showed, in Plate 1 of his paper

[

21

]

published in 1916, a graph with the

kurtosis

as the vertical axis (

ordinate

) and the square of the

skewness

as the horizontal axis (

abscissa

), in which a number of distributions were displayed.

[

31

]

The region occupied by the beta distribution is bounded by the following two

lines

in the (skewness

2

,kurtosis)

plane

, or the (skewness

2

,excess kurtosis)

plane

:

or, equivalently,

At a time when there were no powerful digital computers,

Karl Pearson

accurately computed further boundaries,

[

32

]

[

21

]

for example, separating the "U-shaped" from the "J-shaped" distributions. The lower boundary line (excess kurtosis + 2 − skewness

2

= 0) is produced by skewed "U-shaped" beta distributions with both values of shape parameters

α

and

β

close to zero. The upper boundary line (excess kurtosis − (3/2) skewness

2

= 0) is produced by extremely skewed distributions with very large values of one of the parameters and very small values of the other parameter.

Karl Pearson

showed

[

21

]

that this upper boundary line (excess kurtosis − (3/2) skewness

2

= 0) is also the intersection with Pearson's distribution III, which has unlimited support in one direction (towards positive infinity), and can be bell-shaped or J-shaped. His son,

Egon Pearson

, showed

[

31

]

that the region (in the kurtosis/squared-skewness plane) occupied by the beta distribution (equivalently, Pearson's distribution I) as it approaches this boundary (excess kurtosis − (3/2) skewness

2

= 0) is shared with the

noncentral chi-squared distribution

. Karl Pearson

[

33

]

(Pearson 1895, pp. 357, 360, 373–376) also showed that the

gamma distribution

is a Pearson type III distribution. Hence this boundary line for Pearson's type III distribution is known as the gamma line. (This can be shown from the fact that the excess kurtosis of the gamma distribution is 6/

k

and the square of the skewness is 4/

k

, hence (excess kurtosis − (3/2) skewness

2

= 0) is identically satisfied by the gamma distribution regardless of the value of the parameter "k"). Pearson later noted that the

chi-squared distribution

is a special case of Pearson's type III and also shares this boundary line (as it is apparent from the fact that for the

chi-squared distribution

the excess kurtosis is 12/

k

and the square of the skewness is 8/

k

, hence (excess kurtosis − (3/2) skewness

2

= 0) is identically satisfied regardless of the value of the parameter "k"). This is to be expected, since the chi-squared distribution

X

~ χ

2

(

k

) is a special case of the gamma distribution, with parametrization X ~ Γ(k/2, 1/2) where k is a positive integer that specifies the "number of degrees of freedom" of the chi-squared distribution.

An example of a beta distribution near the upper boundary (excess kurtosis − (3/2) skewness

2

= 0) is given by α = 0.1, β = 1000, for which the ratio (excess kurtosis)/(skewness

2

) = 1.49835 approaches the upper limit of 1.5 from below. An example of a beta distribution near the lower boundary (excess kurtosis + 2 − skewness

2

= 0) is given by α= 0.0001, β = 0.1, for which values the expression (excess kurtosis + 2)/(skewness

2

) = 1.01621 approaches the lower limit of 1 from above. In the infinitesimal limit for both

α

and

β

approaching zero symmetrically, the excess kurtosis reaches its minimum value at −2. This minimum value occurs at the point at which the lower boundary line intersects the vertical axis (

ordinate

). (However, in Pearson's original chart, the ordinate is kurtosis, instead of excess kurtosis, and it increases downwards rather than upwards).

Values for the skewness and excess kurtosis below the lower boundary (excess kurtosis + 2 − skewness

2

= 0) cannot occur for any distribution, and hence

Karl Pearson

appropriately called the region below this boundary the "impossible region". The boundary for this "impossible region" is determined by (symmetric or skewed) bimodal U-shaped distributions for which the parameters

α

and

β

approach zero and hence all the probability density is concentrated at the ends:

x

= 0, 1 with practically nothing in between them. Since for

α

≈

β

≈ 0 the probability density is concentrated at the two ends

x

= 0 and

x

= 1, this "impossible boundary" is determined by a

Bernoulli distribution

, where the two only possible outcomes occur with respective probabilities

p

and

q

= 1 −

p

. For cases approaching this limit boundary with symmetry

α

=

β

, skewness ≈ 0, excess kurtosis ≈ −2 (this is the lowest excess kurtosis possible for any distribution), and the probabilities are

p

≈

q

≈ 1/2. For cases approaching this limit boundary with skewness, excess kurtosis ≈ −2 + skewness

2

, and the probability density is concentrated more at one end than the other end (with practically nothing in between), with probabilities

at the left end

x

= 0 and

at the right end

x

= 1.

All statements are conditional on

α

,

β

> 0:

Geometry of the probability density function

[

edit

]

Inflection point location versus α and β showing regions with one inflection point

Inflection point location versus α and β showing region with two inflection points

For certain values of the shape parameters α and β, the

probability density function

has

inflection points

, at which the

curvature

changes sign. The position of these inflection points can be useful as a measure of the

dispersion

or spread of the distribution.

Defining the following quantity:

Points of inflection occur,

[

1

]

[

8

]

[

9

]

[

20

]

depending on the value of the shape parameters

α

and

β

, as follows:

(

α

> 2,

β

> 2) The distribution is bell-shaped (symmetric for

α

=

β

and skewed otherwise), with

two inflection points

, equidistant from the mode:

(

α

= 2,

β

> 2) The distribution is unimodal, positively skewed, right-tailed, with

one inflection point

, located to the right of the mode:

(

α

> 2, β = 2) The distribution is unimodal, negatively skewed, left-tailed, with

one inflection point

, located to the left of the mode:

(1 <

α

< 2, β > 2,

α

+

β

> 2) The distribution is unimodal, positively skewed, right-tailed, with

one inflection point

, located to the right of the mode:

(0 <

α

< 1, 1 <

β

< 2) The distribution has a mode at the left end

x

= 0 and it is positively skewed, right-tailed. There is

one inflection point

, located to the right of the mode:

(

α

> 2, 1 <

β

< 2) The distribution is unimodal negatively skewed, left-tailed, with

one inflection point

, located to the left of the mode:

(1 <

α

< 2, 0 <

β

< 1) The distribution has a mode at the right end

x

= 1 and it is negatively skewed, left-tailed. There is

one inflection point

, located to the left of the mode:

There are no inflection points in the remaining (symmetric and skewed) regions: U-shaped: (

α

,

β

< 1) upside-down-U-shaped: (1 <

α

< 2, 1 <

β

< 2), reverse-J-shaped (

α

< 1,

β

> 2) or J-shaped: (

α

> 2,

β

< 1)

The accompanying plots show the inflection point locations (shown vertically, ranging from 0 to 1) versus

α

and

β

(the horizontal axes ranging from 0 to 5). There are large cuts at surfaces intersecting the lines

α

= 1,

β

= 1,

α

= 2, and

β

= 2 because at these values the beta distribution change from 2 modes, to 1 mode to no mode.

PDF for symmetric beta distribution vs.

x

and

α

=

β

from 0 to 30

PDF for symmetric beta distribution vs. x and

α

=

β

from 0 to 2

PDF for skewed beta distribution vs.

x

and

β

= 2.5

α

from 0 to 9

PDF for skewed beta distribution vs. x and

β

= 5.5

α

from 0 to 9

PDF for skewed beta distribution vs. x and

β

= 8

α

from 0 to 10

The beta density function can take a wide variety of different shapes depending on the values of the two parameters

α

and

β

. The ability of the beta distribution to take this great diversity of shapes (using only two parameters) is partly responsible for finding wide application for modeling actual measurements:

the density function is

symmetric

about 1/2 (blue & teal plots).

median = mean = 1/2.

skewness = 0.

variance = 1/(4(2

α

+ 1))

α

=

β

< 1

U-shaped (blue plot).

bimodal: left mode = 0, right mode =1, anti-mode = 1/2

1/12 < var(

X

) < 1/4

[

1

]

−2 < excess kurtosis(

X

) < −6/5

α

=

β

= 1/2 is the

arcsine distribution

var(

X

) = 1/8

excess kurtosis(

X

) = −3/2

CF = Rinc (t)

[

34

]

α

=

β

→ 0 is a 2-point

Bernoulli distribution

with equal probability 1/2 at each

Dirac delta function

end

x

= 0 and

x

= 1 and zero probability everywhere else. A coin toss: one face of the coin being

x

= 0 and the other face being

x

= 1.

α = β = 1

the

uniform [0, 1] distribution

no mode

var(

X

) = 1/12

excess kurtosis(

X

) = −6/5

The (negative anywhere else)

differential entropy

reaches its

maximum

value of zero

CF = Sinc (t)

α

=

β

> 1

symmetric

unimodal

mode = 1/2.

0 < var(

X

) < 1/12

[

1

]

−6/5 < excess kurtosis(

X

) < 0

α

=

β

= 3/2 is a semi-elliptic [0, 1] distribution, see:

Wigner semicircle distribution

[

35

]

var(

X

) = 1/16.

excess kurtosis(

X

) = −1

CF = 2 Jinc (t)

α

=

β

= 2 is the parabolic [0, 1] distribution

var(

X

) = 1/20

excess kurtosis(

X

) = −6/7

CF = 3 Tinc (t)

[

36

]

α

=

β

> 2 is bell-shaped, with

inflection points

located to either side of the mode

0 < var(

X

) < 1/20

−6/7 < excess kurtosis(

X

) < 0

α

=

β

→ ∞ is a 1-point

Degenerate distribution

with a

Dirac delta function

spike at the midpoint

x

= 1/2 with probability 1, and zero probability everywhere else. There is 100% probability (absolute certainty) concentrated at the single point

x

= 1/2.

The density function is

skewed

. An interchange of parameter values yields the

mirror image

(the reverse) of the initial curve, some more specific cases:

α

< 1,

β

< 1

U-shaped

Positive skew for

α

<

β

, negative skew for

α

>

β

.

bimodal: left mode = 0, right mode = 1, anti-mode =

0 < median < 1.

0 < var(

X

) < 1/4

α

> 1,

β

> 1

unimodal

(magenta & cyan plots),

Positive skew for

α

<

β

, negative skew for

α

>

β

.

0 < median < 1

0 < var(

X

) < 1/12

α

< 1,

β

≥ 1

α

≥ 1,

β

< 1

α

= 1,

β

> 1

α > 1, β = 1

If

X

~ Beta(

α

,

β

) then 1 −

X

~ Beta(

β

,

α

)

mirror-image

symmetry

If

X

~ Beta(

α

,

β

) then

. The

beta prime distribution

, also called "beta distribution of the second kind".

If

, then

has a

generalized logistic distribution

, with density

, where

is the

logistic sigmoid

.

If

X

~ Beta(

α

,

β

) then

.

If

and

then

has density

for

and

for

, where

is the

Hypergeometric function

.

[

37

]

If

X

~ Beta(

n

/2,

m

/2) then

(assuming

n

> 0 and

m

> 0), the

Fisher–Snedecor F distribution

.

If

then min +

X

(max − min) ~ PERT(min, max,

m

,

λ

) where

PERT

denotes a

PERT distribution

used in

PERT

analysis, and

m

=most likely value.

[

38

]

Traditionally

[

39

]

λ

= 4 in PERT analysis.

If

X

~ Beta(1,

β

) then

X

~

Kumaraswamy distribution

with parameters (1,

β

)

If

X

~ Beta(

α

, 1) then

X

~

Kumaraswamy distribution

with parameters (

α

, 1)

If

X

~ Beta(

α

, 1) then −ln(

X

) ~ Exponential(

α

)

Special and limiting cases

[

edit

]

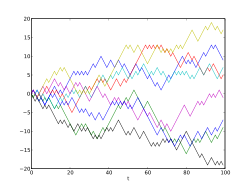

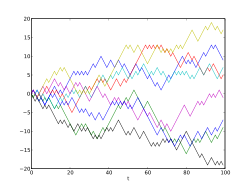

Example of eight realizations of a random walk in one dimension starting at 0: the probability for the time of the last visit to the origin is distributed as Beta(1/2, 1/2)

Beta(1/2, 1/2): The

arcsine distribution

probability density was proposed by

Harold Jeffreys

to represent uncertainty for a

Bernoulli

or a

binomial distribution

in

Bayesian inference

, and is now commonly referred to as

Jeffreys prior

:

p

−1/2

(1 −

p

)

−1/2

. This distribution also appears in several

random walk

fundamental theorems

Beta(1, 1) ~

U(0, 1)

with density 1 on that interval.

Beta(n, 1) ~ Maximum of

n

independent rvs. with

U(0, 1)

, sometimes called a

a standard power function distribution

with density

n

x

n

–1

on that interval.

Beta(1, n) ~ Minimum of

n

independent rvs. with

U(0, 1)

with density

n

(1 −

x

)

n

−1

on that interval.

If

X

~ Beta(3/2, 3/2) and

r

> 0 then 2

rX

−

r

~

Wigner semicircle distribution

.

Beta(1/2, 1/2) is equivalent to the

arcsine distribution

. This distribution is also

Jeffreys prior

probability for the

Bernoulli

and

binomial distributions

.

the

exponential distribution

.

the

gamma distribution

.

For large

,

the

normal distribution

. More precisely, if

then