ℹ️ Skipped - page is already crawled

| Filter | Status | Condition | Details |
|---|---|---|---|
| HTTP status | PASS | download_http_code = 200 | HTTP 200 |
| Age cutoff | PASS | download_stamp > now() - 6 MONTH | 0.7 months ago |
| History drop | PASS | isNull(history_drop_reason) | No drop reason |
| Spam/ban | PASS | fh_dont_index != 1 AND ml_spam_score = 0 | ml_spam_score=0 |
| Canonical | PASS | meta_canonical IS NULL OR = '' OR = src_unparsed | Not set |

| Property | Value |
|---|---|
| URL | https://brilliant.org/wiki/transience-and-recurrence/ |
| Last Crawled | 2026-03-30 04:07:08 (20 days ago) |
| First Indexed | 2016-06-11 04:21:21 (9 years ago) |
| HTTP Status Code | 200 |
| Meta Title | Transience and Recurrence of Markov Chains \| Brilliant Math & Science Wiki |
| Meta Description | A stochastic process contains states that may be either transient or recurrent; transience and recurrence describe the likelihood of a process beginning in some state of returning to that particular state. There is some possibility (a nonzero probability) that a process beginning in a transient state will never return to that state. There is a guarantee that a process beginning in a recurrent state will return to that state. Transience and recurrence issues are central … |
| Meta Canonical | null |

| Boilerpipe Text | Henry Maltby contributed

Intuitively, transience attempts to capture how "connected" a state is to the entirety of the Markov chain. If there is a possibility of leaving the state and never returning, then the state is not very connected at all, so it is known as transient. In addition to the traditional definition regarding probability of return, there are other equivalent definitions of transient states.

Let \(\{X_0, \, X_1, \, \dots\}\) be a Markov chain with state space \(S\). The following conditions are equivalent.

A state \(i \in S\) is transient.

Let \(T_i = \text{min}\{n \ge 1 \mid X_n = i\}\) . Then, \( \mathbb{P}(T_i < \infty \mid X_0 = i) < 1.\)

The following sum converges: \( \displaystyle\sum_{n = 1}^\infty \mathbb{P}(X_n = i \mid X_0 = i) < \infty.\)

\( \mathbb{P}(X_n = i \text{ for infinitely many } n \mid X_0 = i) = 0.\)

Similarly, a classification exists for recurrent states as well.

Let \(\{X_0, \, X_1, \, \dots\}\) be a Markov chain with state space \(S\). The following conditions are equivalent.

A state \(i \in S\) is recurrent.

Let \(T_i = \text{min}\{n \ge 1 \mid X_n = i\}\) . Then, \( \mathbb{P}(T_i < \infty \mid X_0 = i) =1.\)

The following sum diverges: \( \displaystyle\sum_{n = 1}^\infty \mathbb{P}(X_n = i \mid X_0 = i) = \infty.\)

\( \mathbb{P}(X_n = i \text{ for infinitely many } n \mid X_0 = i) = 1.\)

An aperiodic Markov chain with positive recurrent states

A recurrent state is one to which the Markov chain returns infinitely many times with probability 1, yet the expected number of steps until the first return need not be finite. This conflicts with much of the intuition for recurrence, which assumes a finite expected return time, so recurrent states are classified further.

Let \(T_i = \text{min}\{n \ge 1 \mid X_n = i\}\) be the time of the first return to \(i\). Then, the expected value \(\mathbb{E}(T_i \mid X_0 = i)\) is the property discussed above.

A state \(i\) is known as positive recurrent if \(\mathbb{E}(T_i \mid X_0 = i) < \infty\).

A state \(i\) is known as null recurrent if \(\mathbb{E}(T_i \mid X_0 = i) = \infty\).

Periodicity does not determine which kind of recurrence a state has: a periodic recurrent state may be either positive or null recurrent. Likewise, as the figure shows, there exist plenty of aperiodic Markov chains with only positive recurrent states.

The following is a depiction of the Markov chain known as a random walk with reflection at zero.

\(p + q = 1\)

With \(p < \tfrac{1}{2}\), all states in the Markov chain are positive recurrent.

With \(p = \tfrac{1}{2}\), all states in the Markov chain are null recurrent.

With \(p > \tfrac{1}{2}\), all states in the Markov chain are transient.

Stationary Distributions

Markov Chains

Absorbing Markov Chains |

| Markdown |

# Transience and Recurrence of Markov Chains


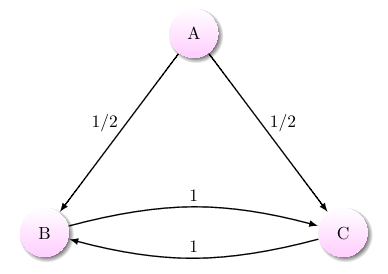

**Henry Maltby** contributed

A Markov chain with one transient state and two recurrent states

A [stochastic process](https://brilliant.org/wiki/stochastic-processes/ "stochastic process") contains states that may be either transient or recurrent; transience and recurrence describe how likely a process that begins in a given state is to return to that state. There is some possibility (a nonzero probability) that a process beginning in a **transient** state *will never* return to that state. There is a guarantee that a process beginning in a **recurrent** state *will* return to that state.

Transience and recurrence issues are central to the study of [Markov chains](https://brilliant.org/wiki/markov-chains/ "Markov chains") and help describe the Markov chain's overall structure. The presence of many transient states may suggest that the Markov chain is [absorbing](https://brilliant.org/wiki/absorbing-markov-chains/ "absorbing"), and a [strong form](https://brilliant.org/wiki/transience-and-recurrence/#positive-vs-null-recurrent) of recurrence is necessary in an [ergodic Markov chain](https://brilliant.org/wiki/ergodic-markov-chains/ "ergodic Markov chain").

> In a Markov chain, there is probability \\(1\\) of eventually (after some number of steps) returning to state \\(x\\). Must the expected number of returns to state \\(x\\) be infinite?

>

> Yes\!

> In a Markov chain, there is probability \\(1\\) of eventually (after some number of steps) returning to state \\(x\\). Must the expected number of steps to return to state \\(x\\) be finite?

>

> No\!

#### Contents

- [Equivalent Definitions](https://brilliant.org/wiki/transience-and-recurrence/#equivalent-definitions)

- [Positive vs Null Recurrent](https://brilliant.org/wiki/transience-and-recurrence/#positive-vs-null-recurrent)

- [See Also](https://brilliant.org/wiki/transience-and-recurrence/#see-also)

## Equivalent Definitions

Intuitively, transience attempts to capture how "connected" a state is to the entirety of the Markov chain. If there is a possibility of leaving the state and never returning, then the state is not very connected at all, so it is known as transient. In addition to the traditional definition regarding probability of return, there are other equivalent definitions of transient states.

> Let \\(\\{X\_0, \\, X\_1, \\, \\dots\\}\\) be a Markov chain with state space \\(S\\). The following conditions are equivalent.

>

> 1. A state \\(i \\in S\\) is transient.

> 2. Let \\(T\_i = \\text{min}\\{n \\ge 1 \\mid X\_n = i\\}\\) . Then, \\( \\mathbb{P}(T\_i \< \\infty \\mid X\_0 = i) \< 1.\\)

> 3. The following sum converges: \\( \\displaystyle\\sum\_{n = 1}^\\infty \\mathbb{P}(X\_n = i \\mid X\_0 = i) \< \\infty.\\)

> 4. \\( \\mathbb{P}(X\_n = i \\text{ for infinitely many } n \\mid X\_0 = i) = 0.\\)

Similarly, a classification exists for recurrent states as well.

> Let \\(\\{X\_0, \\, X\_1, \\, \\dots\\}\\) be a Markov chain with state space \\(S\\). The following conditions are equivalent.

>

> 1. A state \\(i \\in S\\) is recurrent.

> 2. Let \\(T\_i = \\text{min}\\{n \\ge 1 \\mid X\_n = i\\}\\) . Then, \\( \\mathbb{P}(T\_i \< \\infty \\mid X\_0 = i) =1.\\)

> 3. The following sum diverges: \\( \\displaystyle\\sum\_{n = 1}^\\infty \\mathbb{P}(X\_n = i \\mid X\_0 = i) = \\infty.\\)

> 4. \\( \\mathbb{P}(X\_n = i \\text{ for infinitely many } n \\mid X\_0 = i) = 1.\\)
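
The sum criterion (condition 3 in each list) can be checked numerically on a small example. The sketch below uses a hypothetical three-state chain that is not taken from the article: state 0 either stays put or moves to state 1, and states 1 and 2 form a closed class. The matrix `P` and the helper names `mat_mul` and `return_probability_sum` are assumptions made for illustration. The partial sums for state 0 level off near 1, while those for state 1 keep growing with the number of terms.

```python
def mat_mul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def return_probability_sum(P, i, n_max):
    """Partial sum over n = 1..n_max of P(X_n = i | X_0 = i)."""
    power = [row[:] for row in P]  # holds P^n, starting with n = 1
    total = 0.0
    for _ in range(n_max):
        total += power[i][i]
        power = mat_mul(power, P)
    return total


# Hypothetical chain: state 0 is transient, states 1 and 2 are recurrent.
P = [
    [0.5, 0.5, 0.0],
    [0.0, 0.5, 0.5],
    [0.0, 0.5, 0.5],
]

for n_max in (10, 100, 1000):
    print(n_max,
          return_probability_sum(P, 0, n_max),
          return_probability_sum(P, 1, n_max))
```

A finite partial sum can only suggest convergence or divergence; it is not a proof of either.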

## Positive vs Null Recurrent

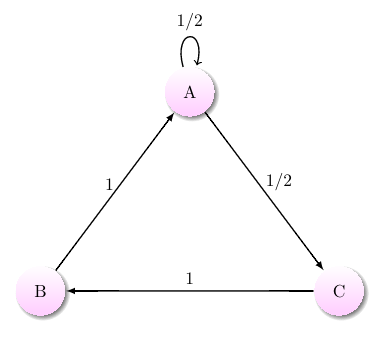

An aperiodic Markov chain with positive recurrent states

A recurrent state is one to which the Markov chain returns infinitely many times with probability 1, yet the expected number of steps until the first return need not be finite. This conflicts with much of the intuition for recurrence, which assumes a finite expected return time, so recurrent states are classified further.

> Let \\(T\_i = \\text{min}\\{n \\ge 1 \\mid X\_n = i\\}\\) be the time of the first return to \\(i\\). Then, the expected value \\(\\mathbb{E}(T\_i \\mid X\_0 = i)\\) is the property discussed above.

>

> - A state \\(i\\) is known as **positive recurrent** if \\(\\mathbb{E}(T\_i \\mid X\_0 = i) \< \\infty\\).

> - A state \\(i\\) is known as **null recurrent** if \\(\\mathbb{E}(T\_i \\mid X\_0 = i) = \\infty\\).
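
As a small worked example of the definition, consider a hypothetical two-state chain (not taken from the article) in which state 0 moves to state 1 with probability a and state 1 moves back to state 0 with probability b, where 0 < a ≤ 1 and 0 < b ≤ 1. The time to reach 0 from 1 is geometric with mean 1/b, so conditioning on the first step gives a finite expected return time, and state 0 is positive recurrent:

```latex
\begin{aligned}
\mathbb{E}(T_0 \mid X_0 = 0)
  &= (1 - a)\cdot 1 + a\left(1 + \mathbb{E}(\text{time to reach } 0 \text{ from } 1)\right) \\
  &= (1 - a) + a\left(1 + \tfrac{1}{b}\right) \\
  &= 1 + \tfrac{a}{b} < \infty .
\end{aligned}
```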

Periodicity does not determine which kind of recurrence a state has: a periodic recurrent state may be either positive or null recurrent. Likewise, as the figure shows, there exist plenty of aperiodic Markov chains with only positive recurrent states.

> The following is a depiction of the Markov chain known as a [random walk](https://brilliant.org/wiki/random-walk/ "random walk") with reflection at zero.  \\(p + q = 1\\)

>

> With \\(p \< \\tfrac{1}{2}\\), all states in the Markov chain are positive recurrent. With \\(p = \\tfrac{1}{2}\\), all states in the Markov chain are null recurrent. With \\(p \> \\tfrac{1}{2}\\), all states in the Markov chain are transient.
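
The three regimes can be explored with a short simulation. This is a minimal sketch under assumptions the article does not state: \\(p\\) is taken to be the probability of stepping right, \\(q\\) the probability of stepping left, and "reflection" is modelled as the walk staying at zero when it tries to step left from zero. The function names, trial counts, and step cap are hypothetical. Under these assumptions the expected return time to zero is \\(q / (q - p)\\) when \\(p \< \\tfrac{1}{2}\\) (1.75 for \\(p = 0.3\\)), and the probability of ever returning is \\(q + p \\cdot \\tfrac{q}{p} = 2q\\) when \\(p \> \\tfrac{1}{2}\\) (0.6 for \\(p = 0.7\\)).

```python
import random


def first_return_time(p, rng, max_steps):
    """Steps until a walk started at 0 is back at 0, or None if it has
    not returned within max_steps."""
    state = 0
    for step in range(1, max_steps + 1):
        if rng.random() < p:
            state += 1          # step right with probability p
        elif state > 0:
            state -= 1          # step left with probability q = 1 - p
        # otherwise the walk is at 0 and stays there (assumed reflection rule)
        if state == 0:
            return step
    return None


def summarize(p, trials=10000, max_steps=500, seed=0):
    """Return (fraction of trials that returned, mean return time among them)."""
    rng = random.Random(seed)
    times = [first_return_time(p, rng, max_steps) for _ in range(trials)]
    returned = [t for t in times if t is not None]
    fraction = len(returned) / trials
    mean_time = sum(returned) / len(returned) if returned else float("nan")
    return fraction, mean_time


for p in (0.3, 0.7):
    fraction, mean_time = summarize(p)
    print(f"p={p}: returned in {fraction:.3f} of trials, "
          f"mean return time {mean_time:.2f}")
```

The null recurrent case \\(p = \\tfrac{1}{2}\\) is left out of the loop on purpose: the walk still returns with probability 1, but the sample mean of the return time depends heavily on the step cap and does not settle, which is what an infinite expectation looks like in practice.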

## See Also

- [Stationary Distributions](https://brilliant.org/wiki/stationary-distributions/ "Stationary Distributions")

- [Markov Chains](https://brilliant.org/wiki/markov-chain/ "Markov Chains")

- [Absorbing Markov Chains](https://brilliant.org/wiki/absorbing-markov-chains/ "Absorbing Markov Chains")

**Cite as:** Transience and Recurrence of Markov Chains. *Brilliant.org*. Retrieved from <https://brilliant.org/wiki/transience-and-recurrence/> |

| Readable Markdown | **Henry Maltby** contributed

Intuitively, transience attempts to capture how "connected" a state is to the entirety of the Markov chain. If there is a possibility of leaving the state and never returning, then the state is not very connected at all, so it is known as transient. In addition to the traditional definition regarding probability of return, there are other equivalent definitions of transient states.

> Let \\(\\{X\_0, \\, X\_1, \\, \\dots\\}\\) be a Markov chain with state space \\(S\\). The following conditions are equivalent.

>

> 1. A state \\(i \\in S\\) is transient.

> 2. Let \\(T\_i = \\text{min}\\{n \\ge 1 \\mid X\_n = i\\}\\) . Then, \\( \\mathbb{P}(T\_i \< \\infty \\mid X\_0 = i) \< 1.\\)

> 3. The following sum converges: \\( \\displaystyle\\sum\_{n = 1}^\\infty \\mathbb{P}(X\_n = i \\mid X\_0 = i) \< \\infty.\\)

> 4. \\( \\mathbb{P}(X\_n = i \\text{ for infinitely many } n \\mid X\_0 = i) = 0.\\)

Similarly, a classification exists for recurrent states as well.

> Let \\(\\{X\_0, \\, X\_1, \\, \\dots\\}\\) be a Markov chain with state space \\(S\\). The following conditions are equivalent.

>

> 1. A state \\(i \\in S\\) is recurrent.

> 2. Let \\(T\_i = \\text{min}\\{n \\ge 1 \\mid X\_n = i\\}\\) . Then, \\( \\mathbb{P}(T\_i \< \\infty \\mid X\_0 = i) =1.\\)

> 3. The following sum diverges: \\( \\displaystyle\\sum\_{n = 1}^\\infty \\mathbb{P}(X\_n = i \\mid X\_0 = i) = \\infty.\\)

> 4. \\( \\mathbb{P}(X\_n = i \\text{ for infinitely many } n \\mid X\_0 = i) = 1.\\)

An aperiodic Markov chain with positive recurrent states

A recurrent state is one to which the Markov chain returns infinitely many times with probability 1, yet the expected number of steps until the first return need not be finite. This conflicts with much of the intuition for recurrence, which assumes a finite expected return time, so recurrent states are classified further.

> Let \\(T\_i = \\text{min}\\{n \\ge 1 \\mid X\_n = i\\}\\) be the time of the first return to \\(i\\). Then, the expected value \\(\\mathbb{E}(T\_i \\mid X\_0 = i)\\) is the property discussed above.

>

> - A state \\(i\\) is known as **positive recurrent** if \\(\\mathbb{E}(T\_i \\mid X\_0 = i) \< \\infty\\).

> - A state \\(i\\) is known as **null recurrent** if \\(\\mathbb{E}(T\_i \\mid X\_0 = i) = \\infty\\).

Periodicity does not determine which kind of recurrence a state has: a periodic recurrent state may be either positive or null recurrent. Likewise, as the figure shows, there exist plenty of aperiodic Markov chains with only positive recurrent states.

> The following is a depiction of the Markov chain known as a [random walk](https://brilliant.org/wiki/random-walk/ "random walk") with reflection at zero.  \\(p + q = 1\\)

>

> With \\(p \< \\tfrac{1}{2}\\), all states in the Markov chain are positive recurrent. With \\(p = \\tfrac{1}{2}\\), all states in the Markov chain are null recurrent. With \\(p \> \\tfrac{1}{2}\\), all states in the Markov chain are transient.

- [Stationary Distributions](https://brilliant.org/wiki/stationary-distributions/ "Stationary Distributions")

- [Markov Chains](https://brilliant.org/wiki/markov-chain/ "Markov Chains")

- [Absorbing Markov Chains](https://brilliant.org/wiki/absorbing-markov-chains/ "Absorbing Markov Chains") |

| Shard | 152 (laksa) |

| Root Hash | 15741013377293584752 |

| Unparsed URL | org,brilliant!/wiki/transience-and-recurrence/ s443 |